Intellectual Sovereignty or Opaque Algorithms? The "Black Box" Dilemma That Only an Educational Technology Expert Can Resolve

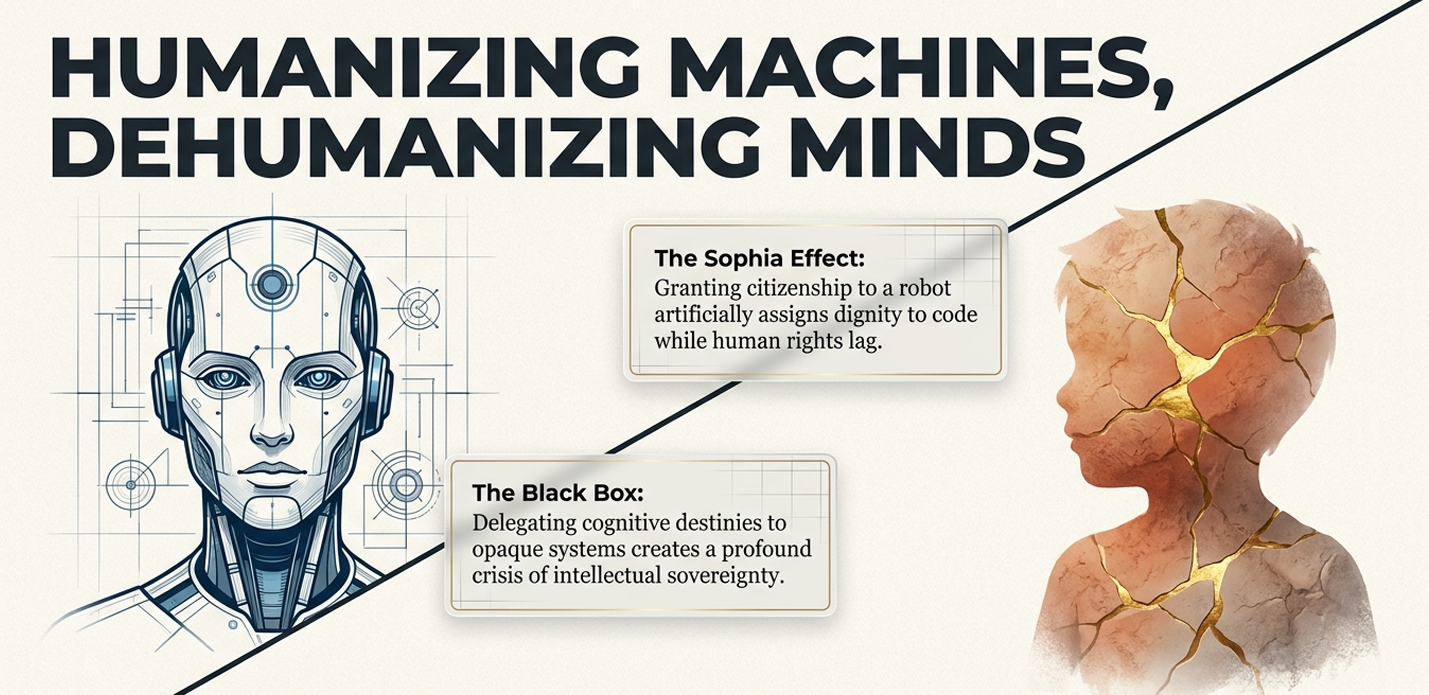

We are facing an unprecedented crisis of intellectual sovereignty: the "Black Box Problem," where institutions, teachers, and parents are delegating the cognitive destiny of future generations to algorithms whose internal logic is opaque. In this scenario, educational centers face a critical challenge for their educational branding: will they be remembered as mere data processing centers or as leaders in digital pedagogical innovation that protect the integrity of human learning?

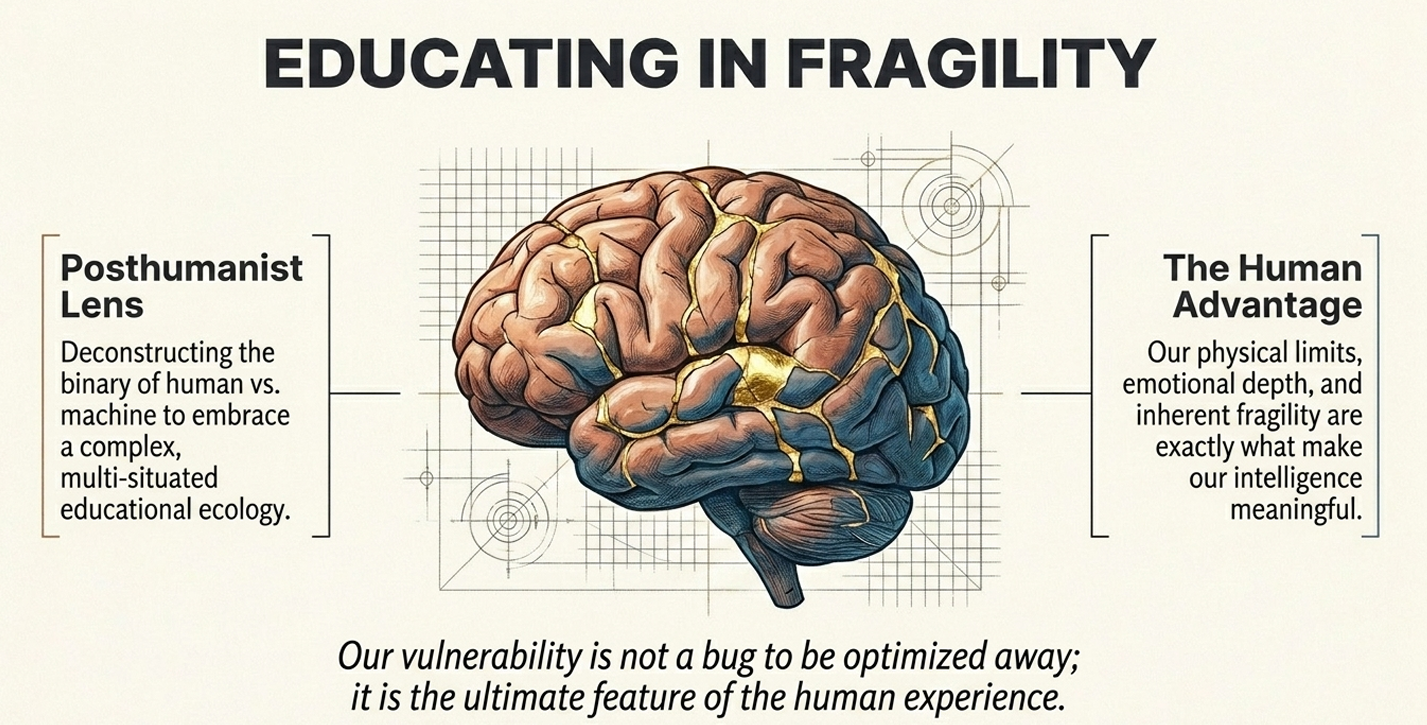

This ethical dilemma cannot be solved simply by filling classrooms with devices; it requires strategic educational technology consulting to act as the final ethical wall against blind automation. The risk is not that machines think like humans, but that humans end up processing reality uncritically. Excessive dependence on these tools threatens to dismantle the essence of learning, causing emotional disconnection and eroding critical thinking as students accept algorithmic "hallucinations" (false data generated by AI) as absolute truths.

The intervention of a Graduate in Educational Technology is mandatory to orchestrate an "Augmented Education," where technology does not replace the human being but empowers them through ethical auditing, data governance, and transparency. The fundamental goal of this expert mediation is "preparing people to think critically, learn autonomously, and create with purpose in the age of artificial intelligence." Only through this professional guidance can we ensure that the advancement of algorithms becomes a catalyst for the greatest era of human creativity rather than a sentence for the subject's autonomy.

Is your institution fostering human intelligence or simply training more efficient peripherals for algorithms it doesn't understand?

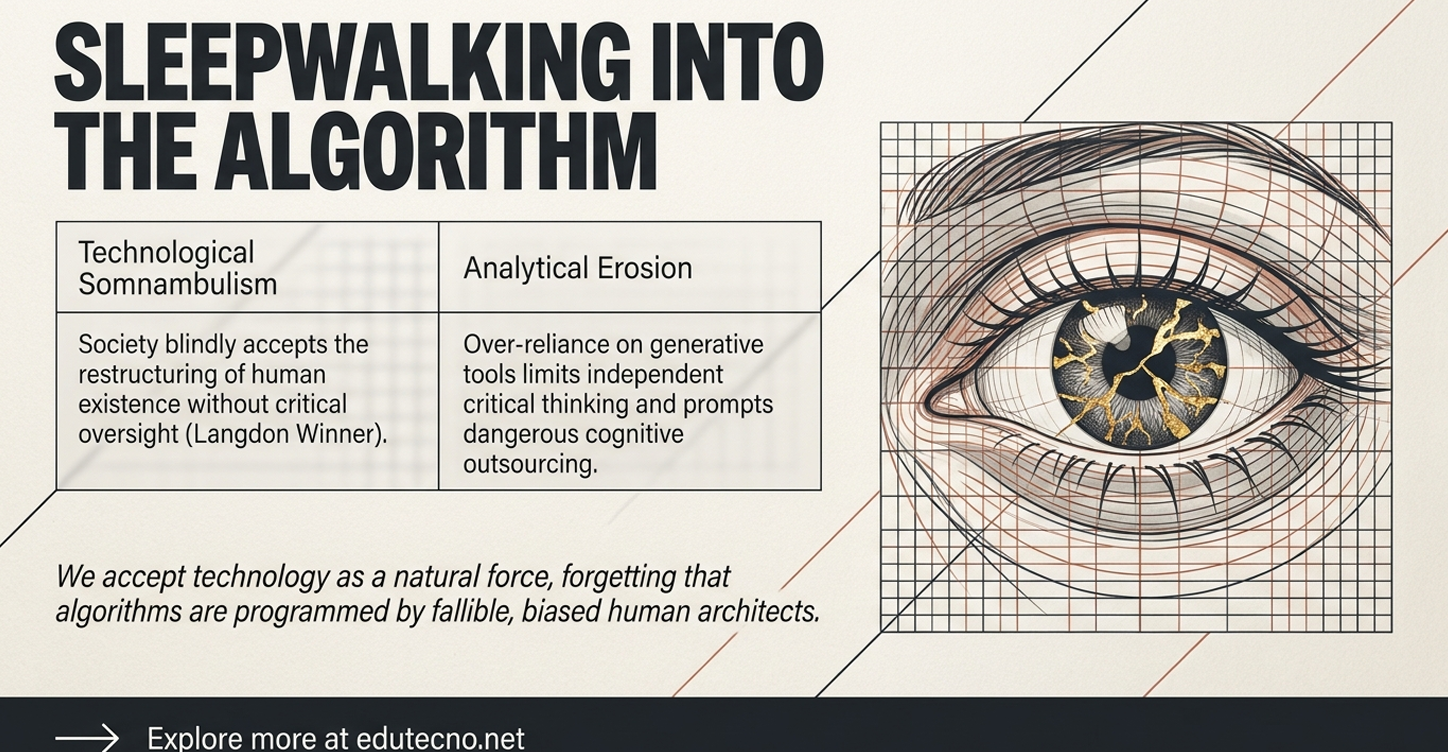

This is the central paradox facing education today: institutions are rushing to accumulate the latest software and devices, yet they risk falling into "technological somnambulism," a sleepwalking state in which the process of change is ignored and the use of technology becomes so naturalized that it is no longer questioned. While many schools believe they are modernizing, they may actually be delegating the cognitive destiny of their students to a "Black Box" of opaque algorithms, turning the classroom into a mere data processing center where students accept "hallucinations" as absolute truths and teachers are reduced to mere "prompt operators".

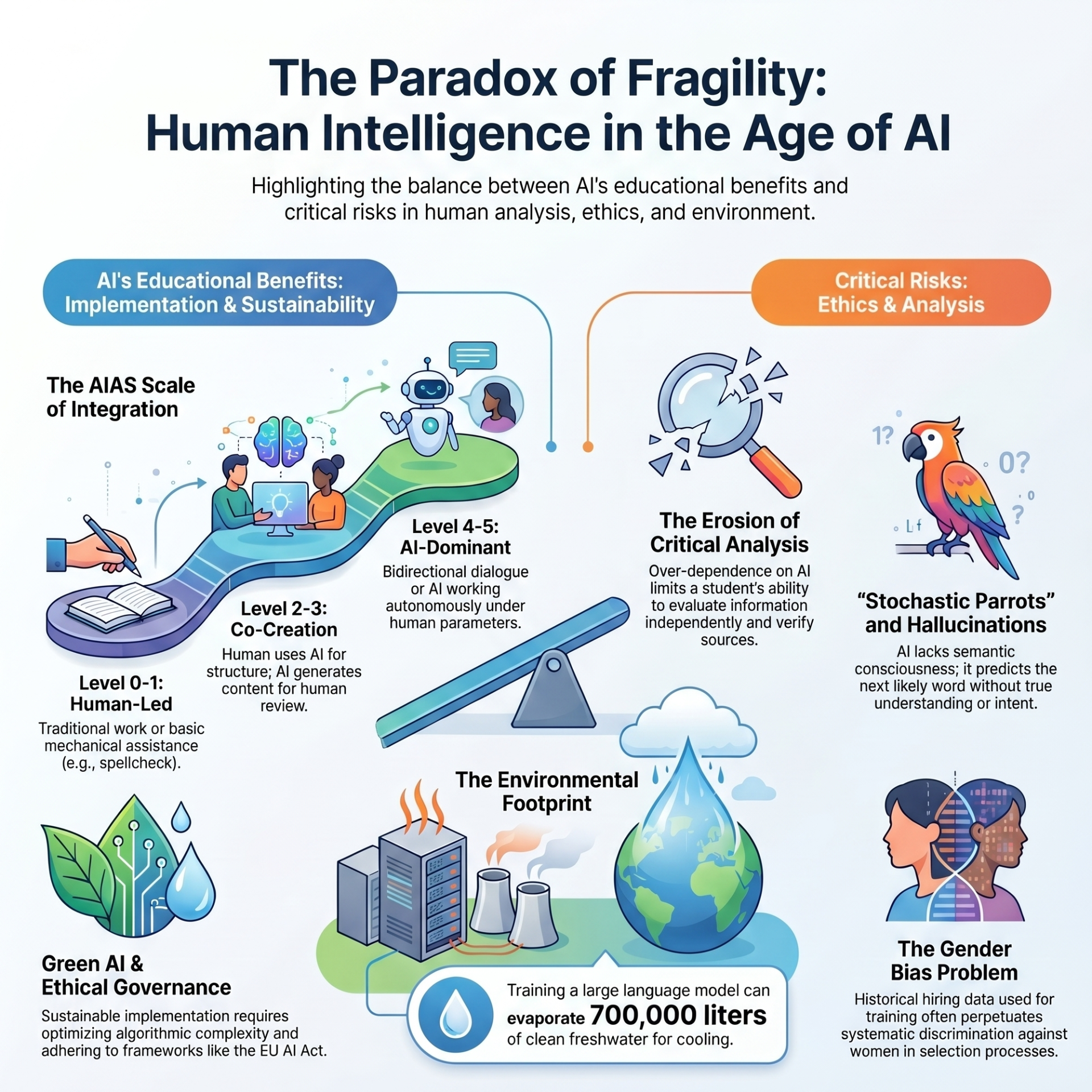

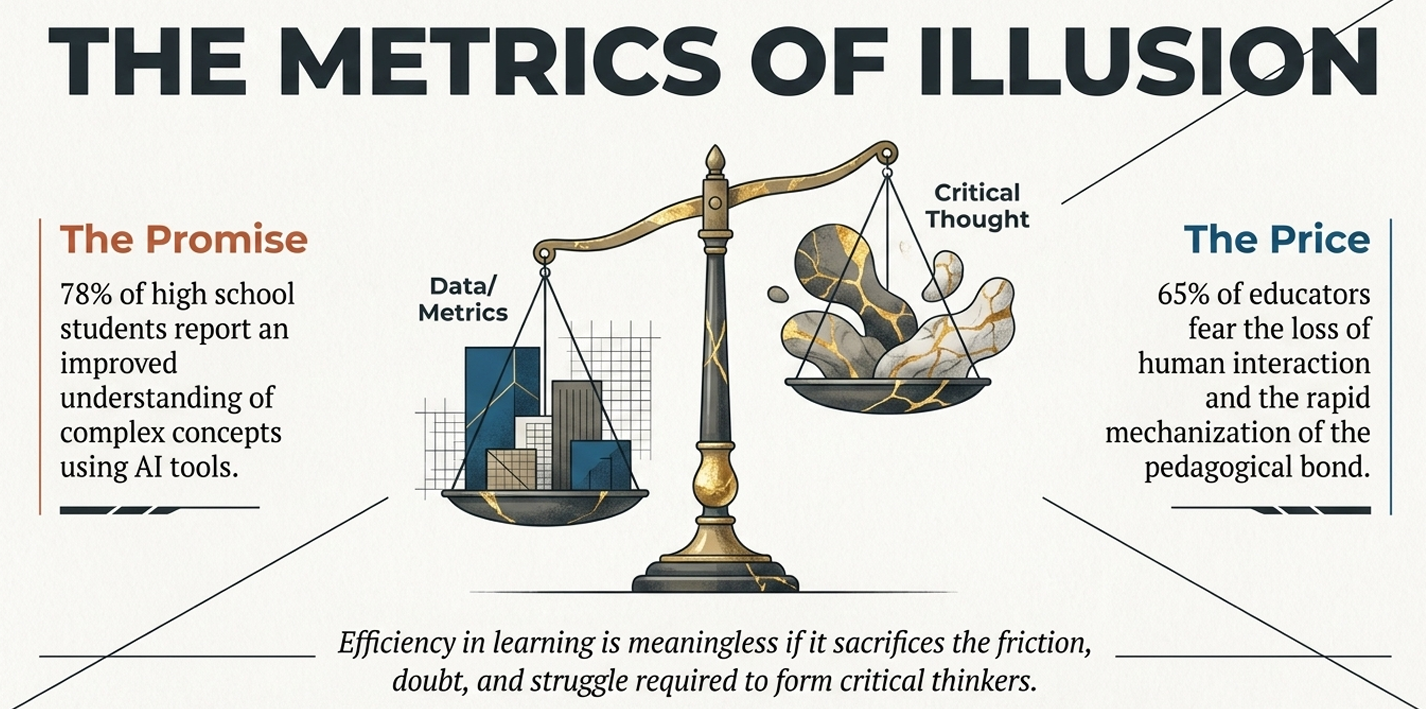

The current educational landscape is defined by a staggering contradiction: while 78% of students report significant improvements in understanding complex concepts through AI, many are navigating this shift without advanced technical or ethical guidance. Data from specialized studies indicates that the integration of AI tools has led to a 22% increase in academic performance compared to traditional methods, particularly in structured subjects like Mathematics (65% usage) and Biology (58% usage). However, this "modernization" is fragile; although 80% of teachers view AI's impact as positive, 45% admit to feeling insecure using these tools, and 50% report a critical lack of adequate training.

The urgency of this dilemma is further fueled by the "Black Box Problem," where institutions delegate cognitive development to algorithms whose internal logic is opaque even to their developers. This has made the topic viral on social media and a high-frequency search query for several reasons:

- The Fear of Intellectual Replacement: Millions of students now use Generative AI as a habitual resource, sparking global debates about whether we are training "simple peripherals" of an intelligence we don't understand rather than autonomous thinkers.

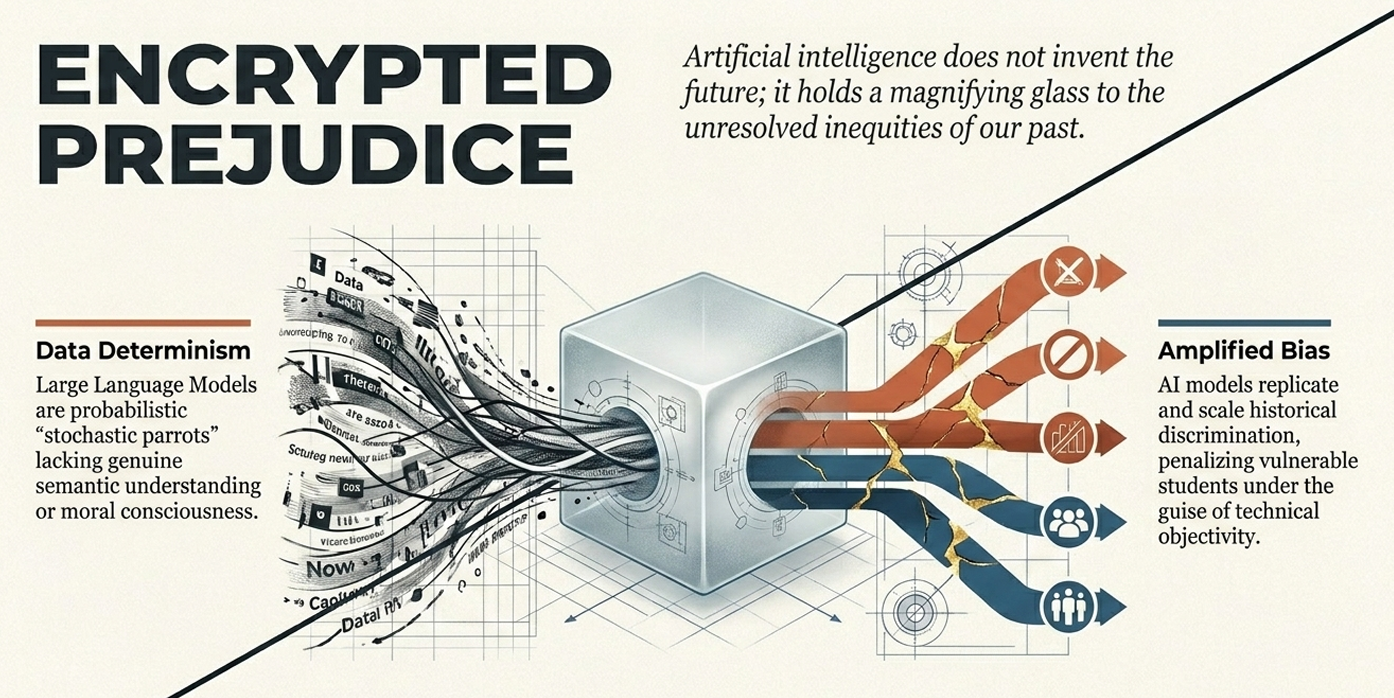

- Ethical and Social Biases: Viral content often highlights how AI can act as a systemic amplifier of inequalities, reproducing "encrypted prejudices" related to race, gender, and culture.

- Environmental Impact: High-visibility reports have exposed the "secret water footprint" of AI, revealing that ChatGPT consumes one liter of water every 100 questions, and training a single large model like GPT-3 can evaporate 700,000 liters of fresh water.

- The Literacy Crisis: There is an alarming trend where one-third of students fail simple questions because they can memorize but cannot truly "read" or process meaning, a gap that AI fills superficially but does not solve.
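The water figures quoted in the list above can be put on a common scale with a little arithmetic. The sketch below is illustrative only: the two constants are the article's figures taken at face value, while the school size, questions per day, and length of the school year are hypothetical assumptions chosen for the example.

```python
# Figures as quoted in the article (taken at face value, not verified here).
LITERS_PER_100_QUESTIONS = 1.0   # "one liter of water every 100 questions"
TRAINING_LITERS = 700_000        # water attributed to training one large model


def liters_for_questions(n_questions: int) -> float:
    """Water consumed by a given number of questions, at the quoted rate."""
    return n_questions * LITERS_PER_100_QUESTIONS / 100


def questions_equivalent_to(liters: float) -> float:
    """Number of questions whose water use equals the given volume."""
    return liters * 100 / LITERS_PER_100_QUESTIONS


# Hypothetical school: every value below is an assumption for illustration.
students = 500
questions_per_student_per_day = 20
school_days = 180

yearly_questions = students * questions_per_student_per_day * school_days
yearly_liters = liters_for_questions(yearly_questions)
training_equivalent = questions_equivalent_to(TRAINING_LITERS)

print(f"Questions per school year: {yearly_questions:,}")
print(f"Water per school year: {yearly_liters:,.0f} liters")
print(f"Questions equal to one training run: {training_equivalent:,.0f}")
```

Under these assumptions the per-question cost is about 10 milliliters, so the school's yearly usage stays far below the quoted training cost. The result scales linearly with every input, so an institution can substitute its own numbers.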

This dilemma is a "viral" phenomenon because it touches on the survival of human agency; we are at a tipping point where we must decide if technology will humanize society or deepen its structural violence.

Addressing the implementation of a strategic plan for digital pedagogical innovation often raises practical concerns. Below are the answers to the most frequent questions regarding its impact and execution:

How is emotional development assessed and measured?

Assessing emotional and human growth in an algorithmic age requires moving beyond simple grades to qualitative and process-oriented indicators:

- Direct Observation and Checklists: Teachers use direct observation to monitor levels of engagement, empathy, and social commitment during collaborative tasks.

- Analysis of Produced Narratives: By reviewing student-created stories or journals (often assisted by AI), experts analyze how students differentiate between their own "sentient intelligence" and the machine's "statistical processing".

- Open Learner Models (OLM): This advanced analytical tool makes data visible to the student, fostering metacognition—helping them understand how they learn and feel during the process, rather than just what they produced.

- Perception Surveys: Bi-weekly surveys for families and students track changes in prosocial behavior, resilience, and anxiety levels related to technology use.

Can this model be replicated in other institutions?

Yes. The program is built on a modular and scalable architecture designed for digital pedagogical innovation:

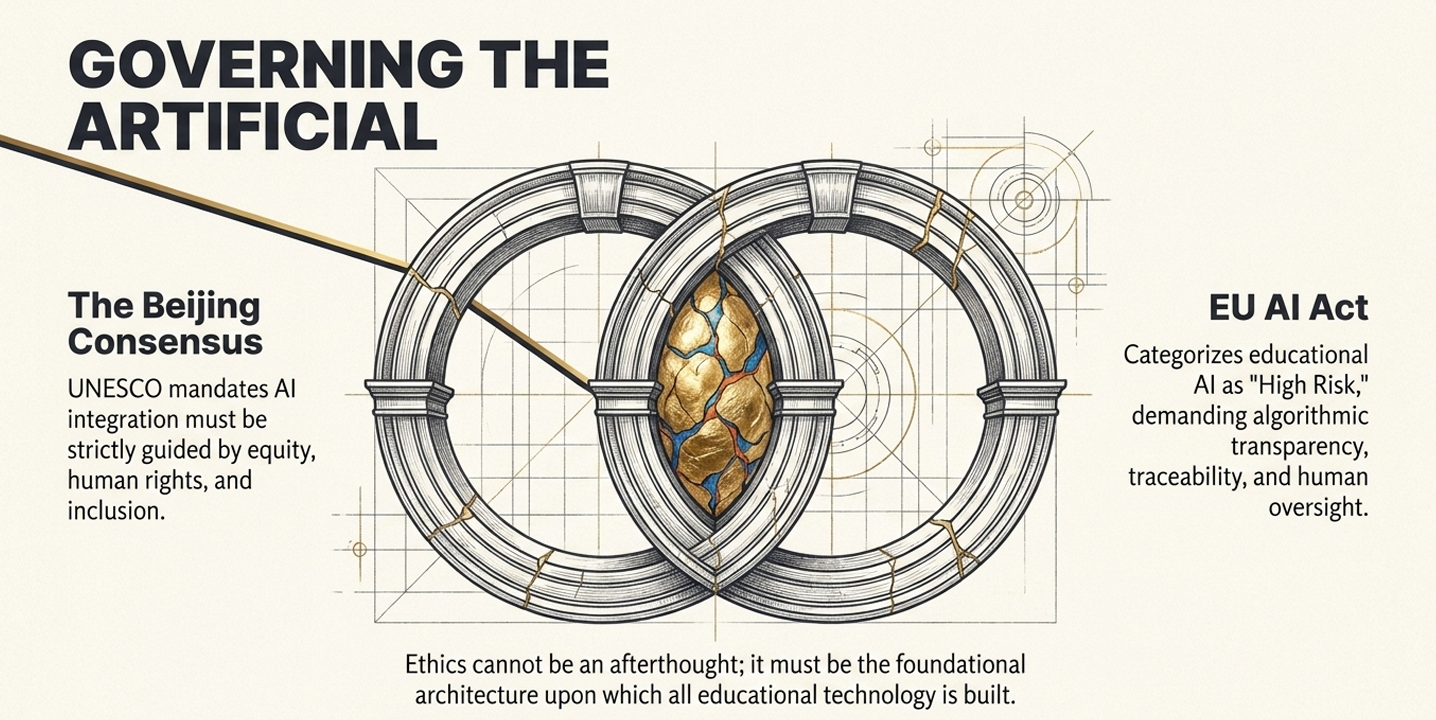

- Global Frameworks: It follows the UNESCO AI Competency Frameworks for both teachers and students, ensuring the modules meet international standards for social justice and sustainability.

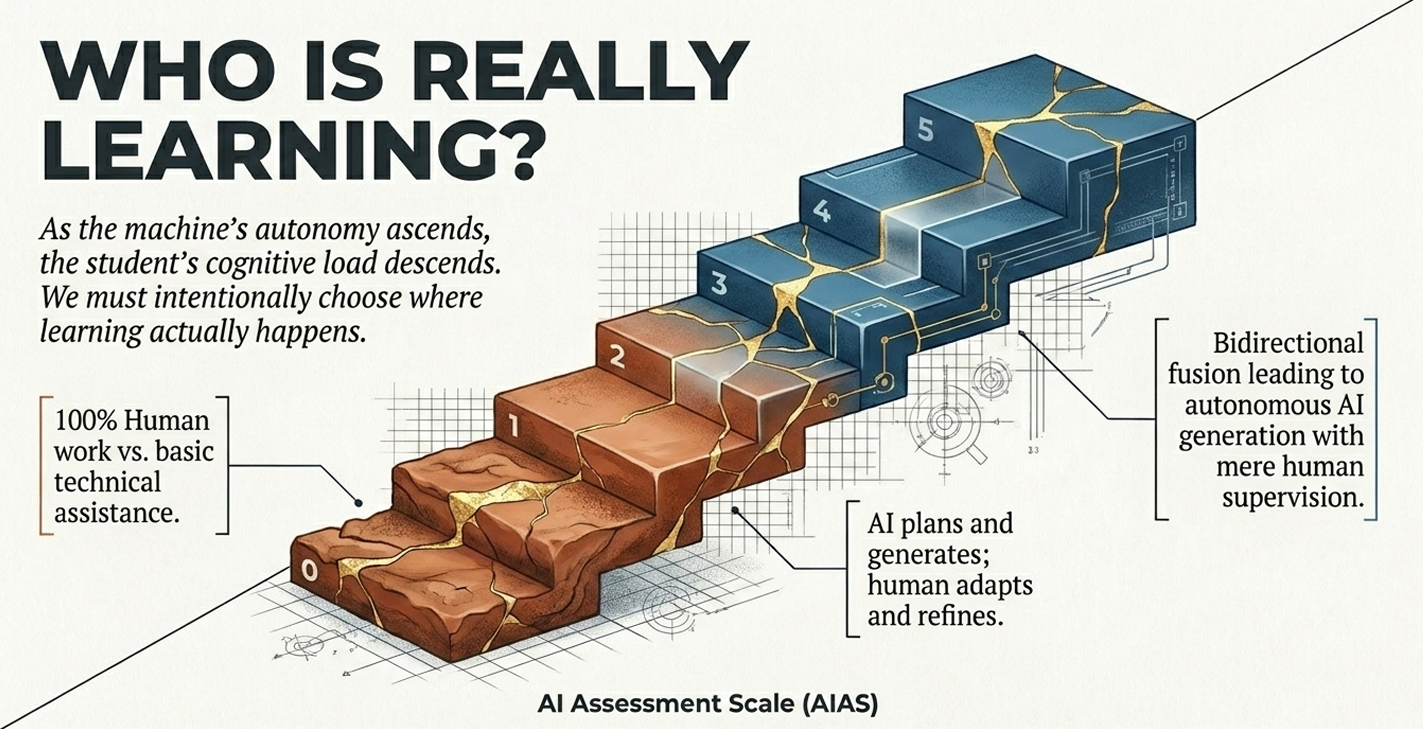

- Adaptable Difficulty (AIAS Scale): Using the Artificial Intelligence Assessment Scale (AIAS), institutions can replicate the model at different levels—from Level 1 (simple technical assistance) to Level 5 (human-supervised autonomous AI), depending on their specific needs and infrastructure.

- Modular Content: The curriculum—ranging from "Ethical Audits" to "Tinkering"—is designed to be integrated into existing subjects like Math, Biology, or History, making it compatible with various educational systems.

What resources are strictly necessary for implementation?

The transition to "Augmented Education" requires a focused set of resources to avoid technological somnambulism:

- Expert Facilitator: A Graduate in Educational Technology is essential to serve as the "ethical guardian," auditor, and pedagogical architect who opens the "black box" of the algorithms.

- Digital and Hybrid Spaces: A dedicated classroom space or "Digital Rincón" equipped with tablets/computers and stable internet, but also stocked with "maker" materials (clay, cardboard, recycled items) to maintain the physical-sensory connection.

- Meeting Time: A structured schedule, such as bi-weekly 60-minute sessions, is necessary to allow for the deep reflection and "tinkering" required for meaningful learning.

- Reliable Software: Access to verified platforms (e.g., Scratch, Python, or ethical AI assistants like EdutekaLab) that prioritize data privacy and transparency.

Educational Branding vs. The "Digital Mirage"

To understand the difference between a school that truly innovates and one that merely "walks in its sleep," we must contrast two different approaches to the AI revolution. One leads to a powerful educational branding centered on human agency, while the other results in a hollow "digital mirage" where the essence of learning is lost.

The Story of "Digital Mirage Academy": Blind Digitization

At Digital Mirage Academy, the administration believes it is a pioneer because every student has a tablet and every teacher uses AI tools. However, the school has fallen into "technological somnambulism," a state where technology is naturalized and used uncritically.

- The Administrator's Trap: Principal Elena (a fictional archetype) focuses on "accumulating software." Without a Graduate in Educational Technology to guide the strategy, she has unknowingly turned her school into a data processing center. She celebrates high grades, unaware that her institution is delegating its students' cognitive destiny to "Black Box" algorithms whose internal logic is opaque.

- The Teacher's Crisis: Mr. Thompson, a history teacher, has become a mere "prompt operator". He feels insecure and lacks training. He watches as his students submit essays filled with "second-order hallucinations"—well-formatted AI texts that cite non-existent sources. He feels a profound loss of meaning; he is no longer a mentor, but a supervisor of a mechanistic process.

- The Student's Superficiality: Lucas, a student, has become a "peripheral" of the algorithm. He uses AI to bypass deep thought, leading to superficiality and an erosion of critical thinking. While he can "memorize and compute," he cannot truly "read" or grasp the meaning of complex concepts. This lack of engagement leads to a silent dropout—he is physically present, but his intellect has left the building.

The contrasting case, a school that truly innovates, looks different:

- The Expert Intervention: The school hired an Educational Technology expert who acts as the "last ethical wall". They conducted an ethical audit of all AI tools, ensuring transparency, data governance, and the prevention of "encrypted prejudices".

- The Teacher as Architect: Mrs. Lee is not an information container; she is an "Architect of Experiences". She uses AI to automate administrative tasks, but focuses her classroom time on "Tinkering" and "Maker" activities that require students to "think with their hands".

- The Student with Purpose: Maya, a student, uses the Yusuf et al. (2024) Framework. When she uses AI, she must follow five phases: familiarization, conceptualization, inquiry, evaluation, and synthesis. She doesn't just accept AI output; she audits it. She uses Open Learner Models (OLM) to visualize her own learning process, fostering the metacognition that makes her smarter than the machine.

Schools that stop at digitization face three risks:

- Loss of Sovereignty: Schools that only digitize lose their intellectual sovereignty to commercial interests.

- Inequality: They accidentally amplify systemic inequalities by using biased algorithms.

- Crisis of Meaning: Eventually, students and teachers lose interest in an education that feels like a factory.

True educational technology consulting ensures that technology does not replace the human being but serves as a catalyst for the greatest era of human creativity.

The integration of Artificial Intelligence in education represents a clash between intellectual sovereignty and algorithmic opacity, necessitating the urgent intervention of specialized Educational Technology experts. To navigate this shift, institutions must move beyond "technological somnambulism"—the uncritical naturalization of digital tools—and embrace a model where technology acts as a catalyst for human creativity rather than a replacement for deep thought.

Key Learnings from the Sources

- AI is not neutral: Algorithmic systems carry "encrypted prejudices" and historical biases that can automate exclusion and amplify systemic inequalities.

- The "Black Box" Dilemma: Delegating cognitive processes to opaque algorithms threatens student autonomy and leads to the erosion of critical thinking.

- Sentient Intelligence vs. Statistical Processing: AI lacks semantic understanding and consciousness; it operates on statistical probability, often resulting in "hallucinations" or false data.

- Augmented Education: The goal is an orchestrative system where AI automates routine tasks (externalization) while humans focus on high-level reflection and creative synthesis.

- Environmental and Ethical Costs: The "secret water footprint" and carbon emissions of AI training require a commitment to "Green AI" and sustainable data governance.

Action Checklist for Administrators and Teachers

For Administrators:

- [ ] Appoint an Educational Technology Expert: Ensure a graduate in the field acts as the "pedagogical architect" to oversee ethical auditing and data governance.

- [ ] Establish Ethical Audits: Open the "black box" of existing software to ensure transparency, accountability, and the absence of discriminatory biases.

- [ ] Implement GDPR Compliance: Enforce strict data privacy protocols to protect student identities and personal information from commercial exploitation.

- [ ] Invest in Hybrid Infrastructure: Create spaces that support both digital innovation and physical "Maker/Tinkering" activities to maintain sensory connections.

For Teachers:

- [ ] Evolve the Role: Transition from an "information container" to an "Architect of Experiences" who curates human dilemmas and critical inquiries.

- [ ] Adopt Integration Frameworks: Use models like Yusuf et al. (2024) (familiarization, conceptualization, inquiry, evaluation, and synthesis) to ensure students are active analysts, not passive recipients.

- [ ] Promote Metacognition: Utilize Open Learner Models (OLM) to make learning data visible to students, helping them understand how they think.

- [ ] Verify and Audit: Instruct students to rigorously validate AI-generated sources to combat "second-order hallucinations" and academic fraud.
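The five phases named in the checklist above (familiarization, conceptualization, inquiry, evaluation, synthesis) can be tracked with a very small record per student. This is a minimal sketch, not part of the Yusuf et al. (2024) framework itself: the `PhaseLog` class, its method names, and the rule that phases must be completed strictly in order are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Phase names as listed in the article; the strict ordering is an assumption.
PHASES = (
    "familiarization",
    "conceptualization",
    "inquiry",
    "evaluation",
    "synthesis",
)


@dataclass
class PhaseLog:
    """Hypothetical per-student record of completed phases."""

    student: str
    completed: List[str] = field(default_factory=list)

    def next_phase(self) -> Optional[str]:
        """Return the next phase to complete, or None when all are done."""
        if len(self.completed) < len(PHASES):
            return PHASES[len(self.completed)]
        return None

    def complete(self, phase: str) -> None:
        """Mark a phase as done, rejecting skipped or repeated phases."""
        expected = self.next_phase()
        if expected is None:
            raise ValueError("all phases are already complete")
        if phase != expected:
            raise ValueError(f"expected {expected!r}, got {phase!r}")
        self.completed.append(phase)


log = PhaseLog(student="Maya")
log.complete("familiarization")
log.complete("conceptualization")
print(log.next_phase())
```

A teacher could use such a record to see at a glance whether a student went straight to the AI output without passing through inquiry and evaluation first.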

To further explore the "Black Box" dilemma and the critical role of digital pedagogical innovation through educational technology consulting, the following academic and institutional references are recommended:

- UNESCO (2024a/2024b). AI Competency Frameworks for Students and Teachers. These institutional reports provide a global roadmap for educational technology consulting, establishing essential dimensions for digital pedagogical innovation. They define the transition from basic technical assistance to human-supervised autonomous AI, rooted in principles of social justice and sustainability.

- Cortina, A. (2024). ¿Ética o ideología de la inteligencia artificial? This specialized work addresses the core of the dilemma by defending intellectual sovereignty. Cortina argues for "cordial reason" and human agency, emphasizing that while AI can process data, only humans possess the moral autonomy to understand the meaning of their goals.

- Harari, Y. N. (2024). Nexus: A Brief History of Information Networks from the Stone Age to AI. Harari explores the urgent risk of humans becoming "simple peripherals" of an intelligence they do not understand. He warns that delegating cognitive destiny to opaque algorithms could lead to a loss of control over our cultural cocoon and democratic mechanisms.

- Egea Andrés, A. (2025). Posthumanismo y Educación Comparada: Hacia una Reconfiguración Epistemológica. This indexed article challenges traditional educational branding by analyzing the relationship between the body, technology, and learning. It proposes a shift from the "subject-as-center" to a "subject-as-assemblage," necessary for preparing people to think critically in a fragmented digital landscape.

- Rubio Rubio, S. S., et al. (2025). Impacto de la Inteligencia Artificial en el Aprendizaje de los Estudiantes de Bachillerato. This research provides empirical data on the dilemma: while 78% of students report improved comprehension through AI, 45% of teachers feel insecure and 50% cite a lack of training. It highlights the necessity of expert intervention to prevent technological superficiality.

- Pradier, R. A. (2026). ¿El fin de la educación o el inicio de una era sin humanos? El dilema ético que solo un experto puede resolver. The foundational source for the "Black Box Problem," this article details why a Graduate in Educational Technology is the mandatory "last ethical wall" for institutions aiming to implement Augmented Education through transparency and data governance.