Sovereign Innovation or Algorithmic Mediocrity: Is Your Institution Losing the AI Race?

Educational institutions have reached a critical breaking point where the massive, uncritical influx of artificial intelligence threatens to automate mediocrity and deepen systemic inequalities. This urgent dilemma presents a choice between the "fascination for the tool" and the necessity of sovereign pedagogical design, as many schools currently inhabit a "digital mirage"—investing heavily in software only to reproduce obsolete 19th-century instructional methods. The crisis is fueled by a staggering asymmetry: while nearly 87% of students already utilize AI, most faculty lack the training to integrate it meaningfully, resulting in the evaluation of "zombie ideas" that the technology has already rendered irrelevant.

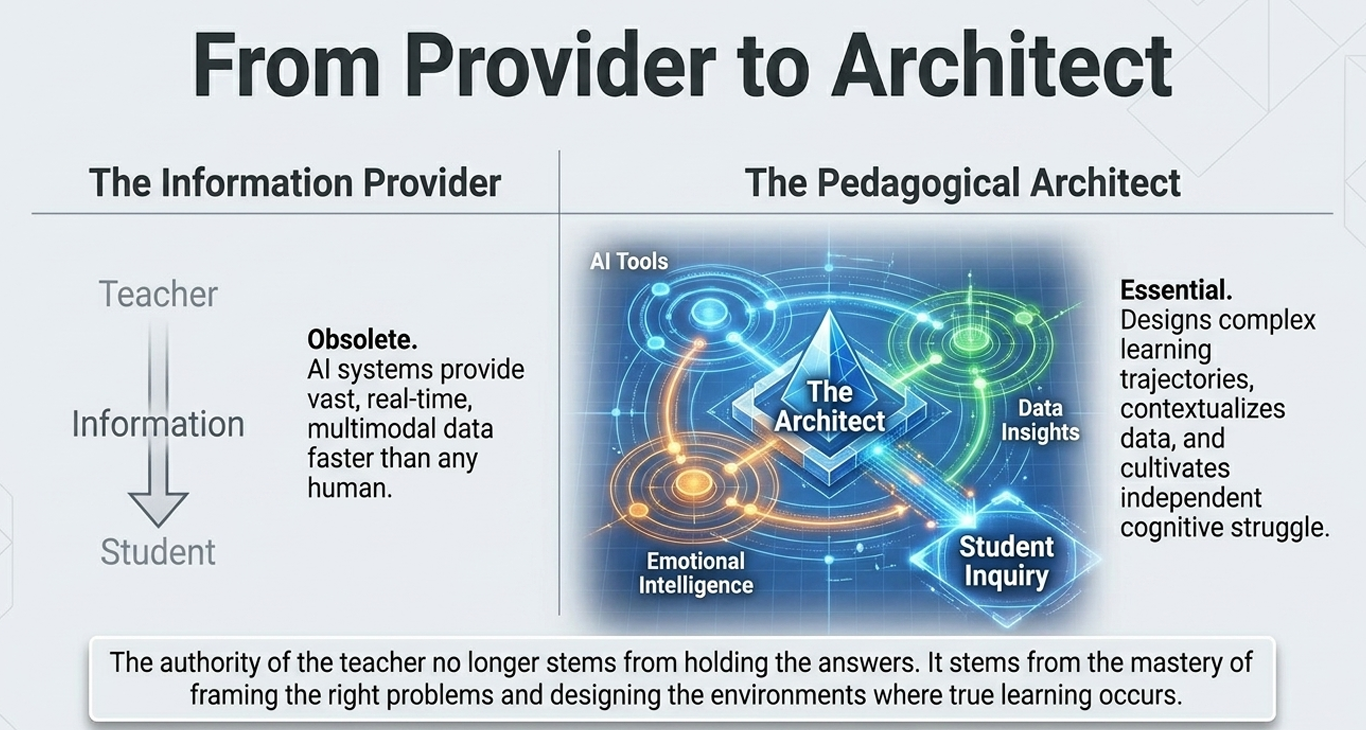

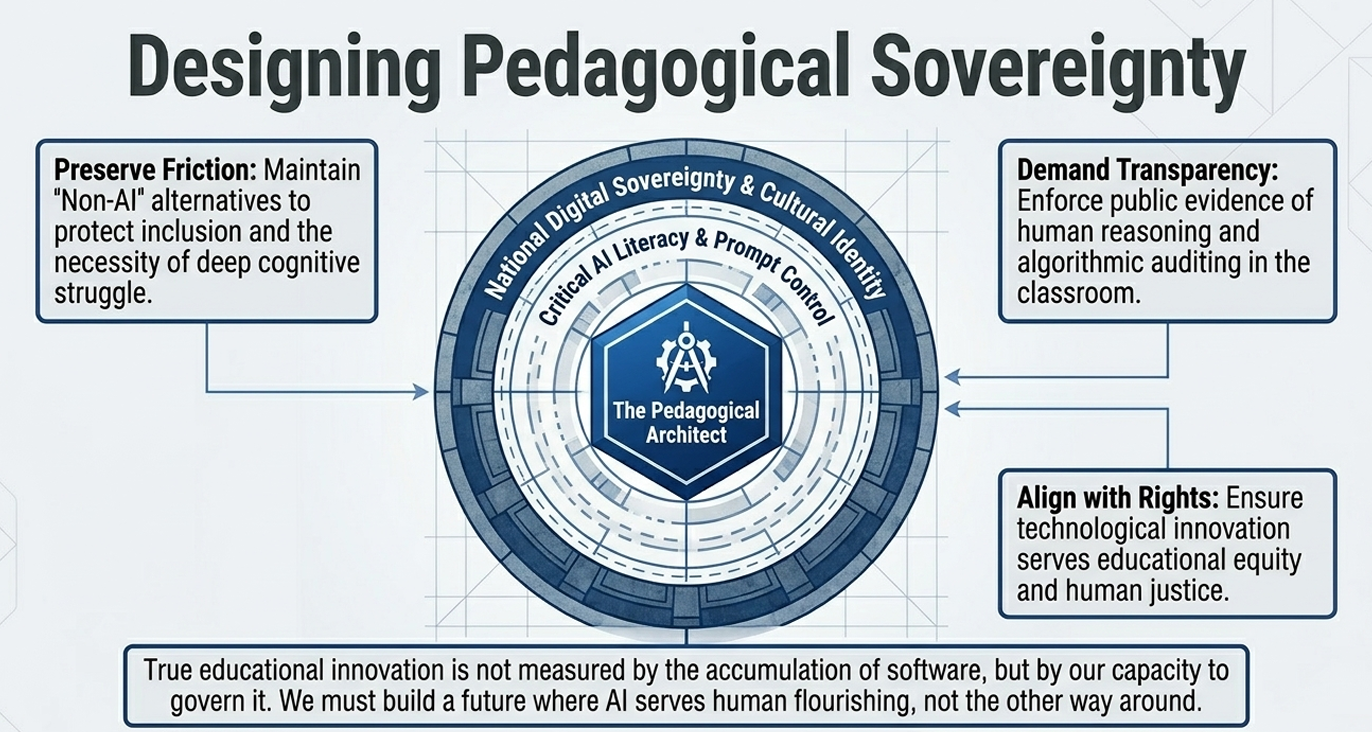

This structural collapse can only be resolved through the strategic intervention of a graduate in Educational Technology, acting as a pedagogical architect and epistemic mediator. Through specialized educational technology consulting, institutions can transition from mere digitalization to authentic digital pedagogical innovation. This transformation ensures that AI serves as a "critical prosthesis" that expands human cognitive boundaries rather than an "intellectual crutch" that atrophies reasoning and curiosity.

Ultimately, an institution's educational branding is no longer defined by the quantity of its software licenses, but by its commitment to a sovereign educational strategy. This professional leadership is essential for preparing people to think critically, learn autonomously, and create with purpose in the age of artificial intelligence, transforming the classroom into a space where technology is a tool for empowerment rather than a mechanism for data extraction or cultural dependency.

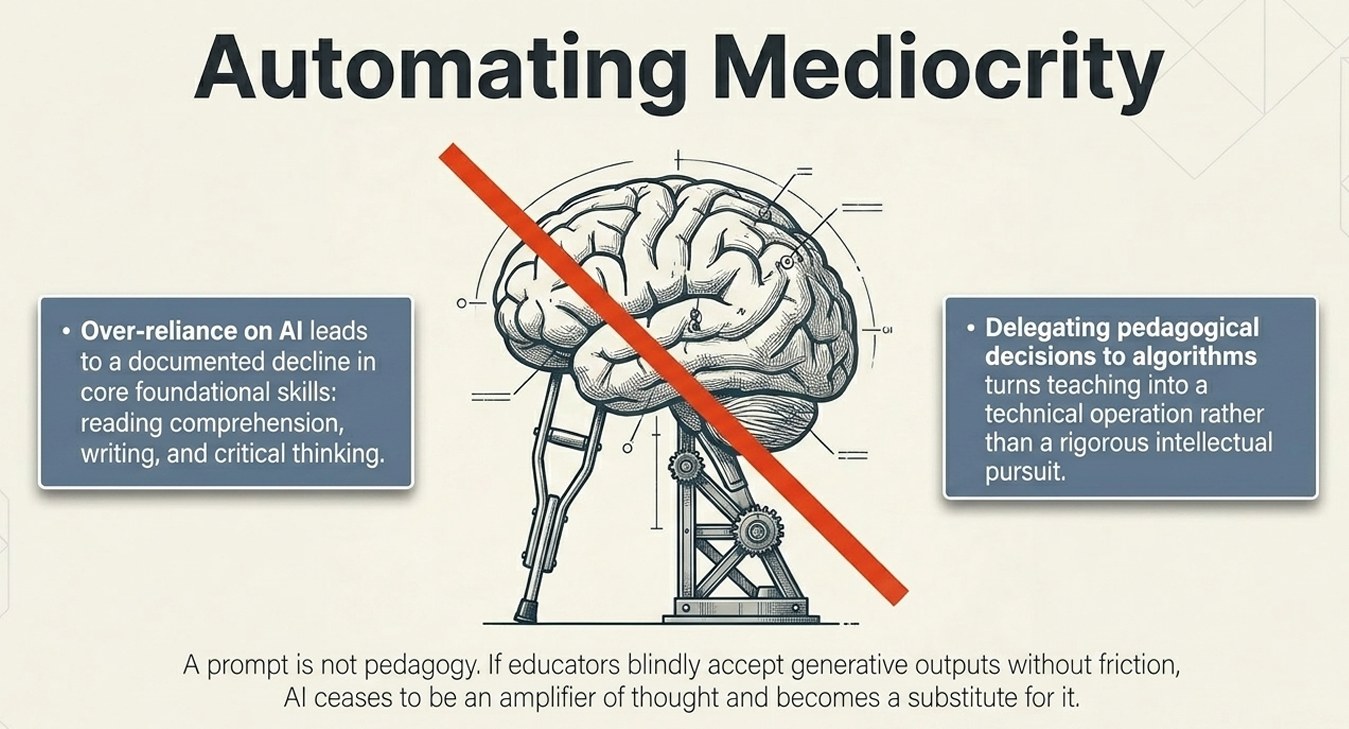

Does your institution truly educate for the future, or is it merely accumulating software while automating 19th-century mediocrity? This is the critical paradox of the digital era: schools are integrating high-tech tools at a record pace, yet they often use them to reinforce obsolete methods of repetition and memorization. By mistaking digitalization for innovation, many institutions fall into a "digital mirage"—buying software licenses while losing the sovereign pedagogical design required to prevent technology from becoming an "intellectual crutch" that atrophies reasoning. Are you fostering pedagogical architects of the future, or are you simply evaluating "zombie ideas" that an algorithm has already solved?

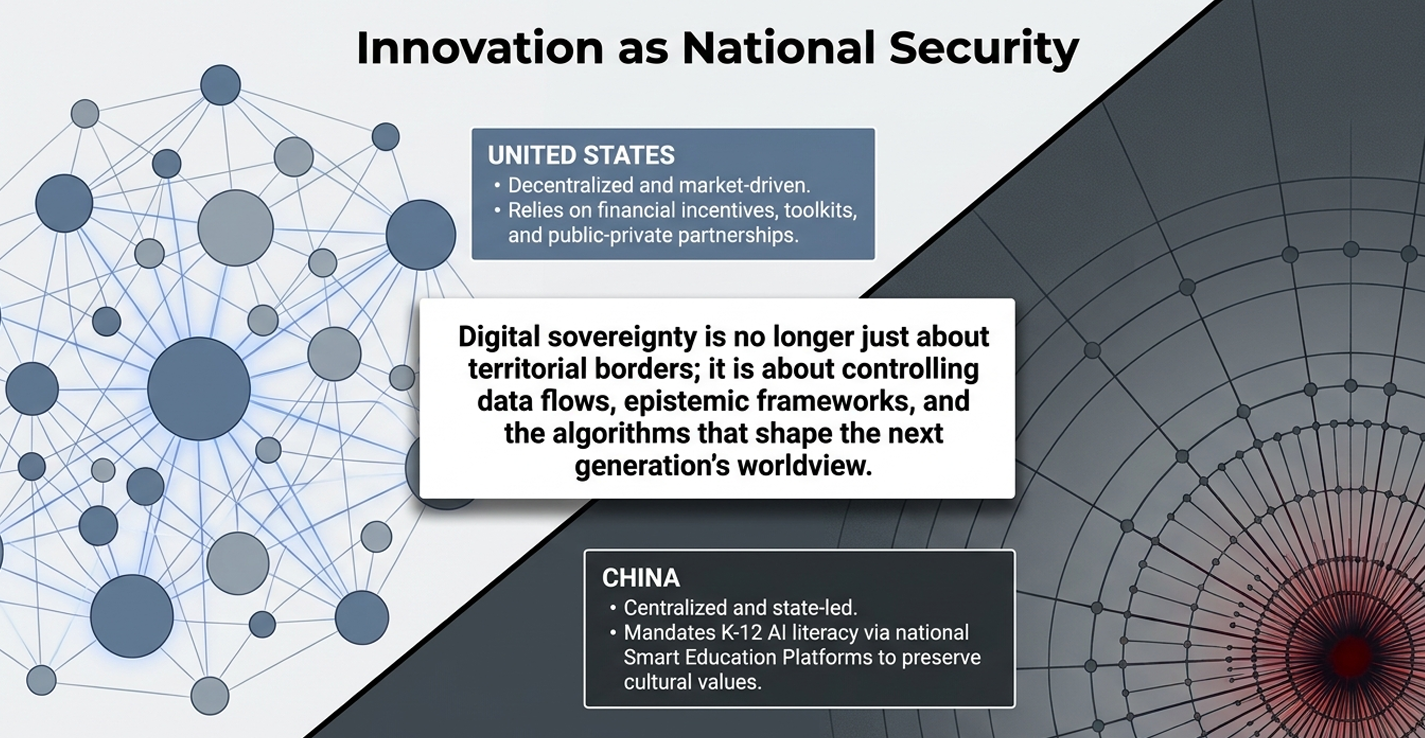

The urgency of the current educational crisis is driven by a staggering asymmetry in adoption and a global race for technological sovereignty. The following data and trends illustrate why the intervention of a pedagogical expert is no longer optional:

1. The Great Adoption Gap and Adoption Speed

- Historical Speed: ChatGPT was widely reported to be among the fastest-adopted consumer technologies on record, reaching its early user milestones faster than global platforms like TikTok and Instagram.

- The Usage Chasm: There is a profound "usage gap" between students and faculty. While reports indicate that 87% of university students already utilize AI programs, faculty integration lags significantly. For instance, among physics teachers, only 31.48% use it to prepare materials, while over half (52.37%) have only tested it to understand its basic functions.

- Institutional Mandates: Global powers are treating AI education as a matter of national security. As of 2025, Beijing has mandated at least 8 hours of AI instruction per academic year across all K–12 schools.

2. Evidence of Structural Fragility

- Foundational Skills Crisis: In Argentina's Aprender 2024 assessment, only 14.2% of final-year high school students reached a satisfactory level in mathematics. The uncritical arrival of AI risks acting as an "intellectual crutch" that masks these underlying deficiencies rather than solving them.

- The Double Digital Divide: Technology is deepening existing inequalities. In some regions, 31% of rural schools still lack basic connectivity, ensuring that AI benefits remain concentrated in affluent sectors.

- The Dependency Trap: The "nested dependency" on a handful of global technology providers creates massive risks for national digital resilience, as evidenced by large-scale outages that disrupt banking, healthcare, and government services simultaneously.

3. Why This Problem Is Viral and Highly Searched

This dilemma dominates social media and public discourse because it triggers "magical thinking" and a fascination with the tool. The ability of AI to "narrate" and solve complex problems in seconds challenges traditional notions of authorship and human expertise.

The conversation has become viral for three primary reasons:

- The Threat of Replacement: It touches the sensitive fear of teacher replacement by automation.

- Integrity Crisis: It presents a constant threat to academic integrity and the definition of plagiarism.

- Technological Solutionism: It feeds a popular but erroneous belief that technology can independently resolve deep-seated educational problems, leading many to search for "quick fixes" through prompts rather than pedagogical redesign.

Without a graduate in Educational Technology to act as a mediator, institutions remain trapped evaluating "zombie ideas"—obsolete methods that AI can already solve—instead of fostering authentic human reasoning.

Measurable Impact: How is emotional development assessed?

Assessing impact in an AI-integrated environment requires moving beyond standardized testing toward indicators that capture public evidence of reasoning and the quality of human-AI interaction. Key indicators include:

- Perception and Utility Surveys: Based on the theory of diffusion of innovations, measuring how students and teachers perceive the utility, compatibility, and ease of use of these tools helps determine their integration into the "pedagogical heart" of the institution.

- Narrative and Process Analysis: Instead of just evaluating the final result, indicators focus on authorship and verification logs. Students are assessed on their ability to explain why they accepted or rejected specific AI suggestions, demonstrating critical thinking and emotional maturity in their decision-making.

- Cognitive and Emotional Resilience: Tracking the transition from using AI as an "intellectual crutch" to a "critical prosthesis" that expands the student's ability to solve complex, situated problems.

Scalability: Can it be replicated in other institutions?

Yes. The framework is designed to be highly adaptable and modular, regardless of the institution's starting point:

- Shift from Content to Process: Because the role of the pedagogical architect focuses on how to learn rather than what to teach, the model can be replicated across different educational levels and subjects.

- Adaptable Governance Models: The strategies used by global powers show that implementation can scale through centralized platforms (like national smart education systems) or decentralized, incentive-based grants that allow individual schools to innovate within their own contexts.

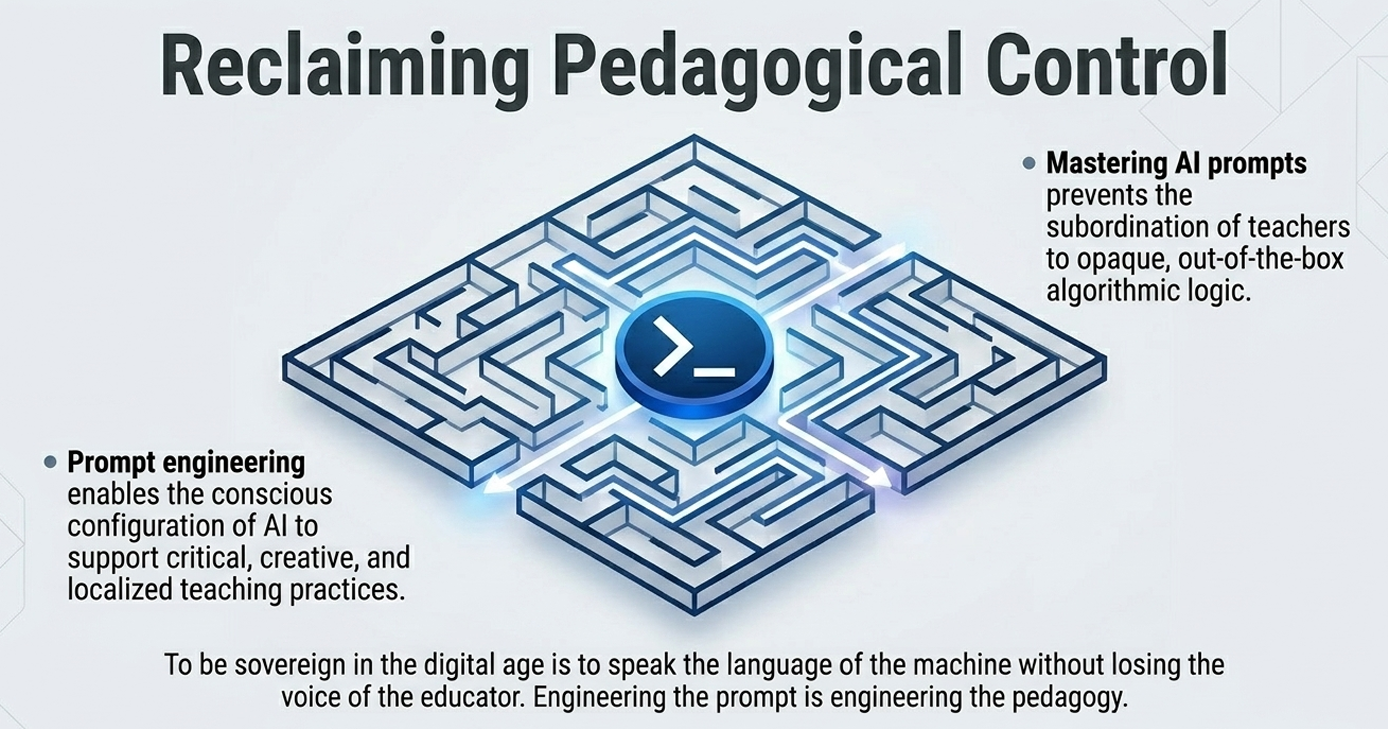

- Modular Competencies: Skills such as critical AI literacy and prompt engineering act as universal "educational toolkits" that can be integrated into any existing curriculum.

Necessary Resources: What is required for implementation?

A successful transition to this model requires a strategic combination of human capital and infrastructure:

- Specialized Facilitator: The presence of a Graduate in Educational Technology to serve as a pedagogical architect and "epistemic mediator" who guides the design of learning experiences.

- Secure Digital Space: A robust infrastructure, such as an LMS or a Smart Education Platform, backed by a multi-cloud strategy to ensure national and institutional digital resilience.

- Strategic Meeting Time: Dedicated time for pre-service and in-service professional development, focusing on the ethical and pedagogical dimensions of AI rather than just technical training.

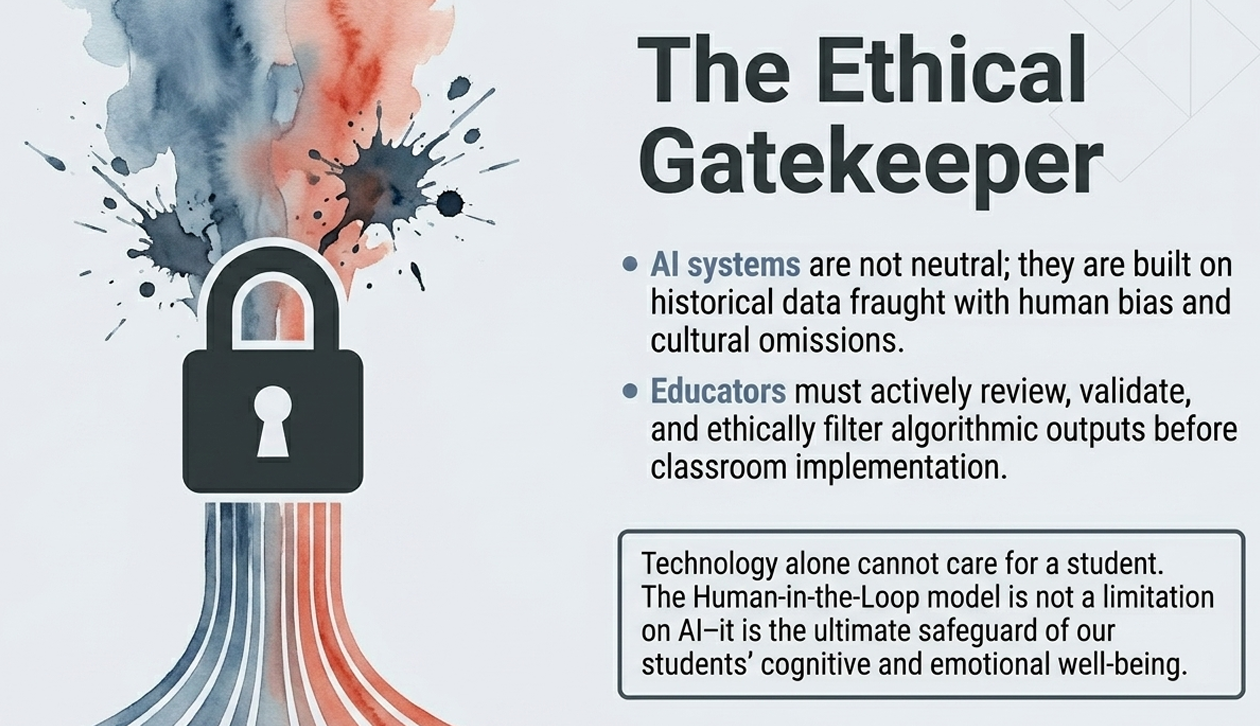

- Ethical Institutional Framework: A clearly defined set of policies regarding data privacy, algorithmic transparency, and a commitment to human-centered decision-making.

From the Digital Mirage to Pedagogical Sovereignty

To understand the transformative power of a Graduate in Educational Technology, we must look past the screens and into the lived experiences of those inhabiting our schools. The difference between an institution that simply digitizes processes and one that builds educational branding with purpose is the difference between a factory of "zombie ideas" and a laboratory of human flourishing.

The "Digital Mirage": Automating the Past

In many institutions, a well-meaning administrator signs a check for thousands of software licenses, believing that "innovation" is something you can buy in a box.

- The Teacher's Burden: Without the guidance of a pedagogical architect, the teacher feels like a mere operator of non-transparent tools. They feel overwhelmed and threatened by an automation that seems to "flatten" the human complexity of their craft. To survive the workload, they might use AI to generate rigid lesson plans in seconds, but in doing so, they lose the meaning of their vocation.

- The Student's Void: The student quickly senses this lack of purpose. They fall into the "copy-paste" algorithmic trap, submitting work that is grammatically perfect but intellectually empty. The result is superficial learning in which students are evaluated on "zombie ideas" (obsolete methods that the technology has already solved). Pedagogical sense is lost and, eventually, dropout rates rise as the school becomes a place of mere data processing rather than growth.

The "Sovereign Institution": Designing with Purpose

In contrast, institutions that apply educational branding understand that their prestige lies in their commitment to digital pedagogical innovation, led by a specialist who acts as an epistemic mediator.

- The Empowered Educator: Here, the teacher transitions from a consumer of resources to a pedagogical architect. They no longer fear replacement; instead, they learn to create the "artisanal prompt"—a creative, intentional instruction that challenges the algorithm rather than obeying it. The teacher is the curator of an "AI-integrated classroom" where technology is a "critical prosthesis" that expands human limits.

- The Critical Learner: The student does not use AI to evade effort but to amplify it. They are taught to practice systematic doubt, verifying AI "hallucinations" and contrasting algorithmic outputs with local, situated reality. This creates antifragile learning: the student learns to think with sound judgment, creating work with a clear purpose and authentic authorship.

The Cost of Silence: The Need for Intervention

The absence of professional educational technology consulting is not just a technical failure; it is an ethical risk. Without an expert to "decolonize" the use of these tools, institutions risk committing "epistemicide": silencing local knowledge and critical thought in favor of the efficiency of foreign algorithms. When technology is used as an "intellectual crutch," it atrophies the very reasoning it was meant to support, turning the school into a mechanism for reproducing inequality rather than a space for liberation.

This strategic conclusion synthesizes the transition from the "instrumental use" of technology to the intentional design of purposeful pedagogical experiences.

Summary of Key Learnings

- AI is not a neutral tool: It carries a "pedagogical bias" that, if left unaddressed, tends to reproduce traditional models of memorization and direct instruction.

- Inversion of the pedagogical order: Educational intention and didactic design must precede the tool; AI should be the means to materialize ideas, not the starting point.

- The "Pedagogical Architect" shift: The educator's role evolves from a mere "information provider" to a pedagogical architect and epistemic mediator who can contextualize and guide the construction of understanding.

- Combatting "Zombie Ideas": Traditional assessments and closed-response exams are vulnerable to AI; institutions must move toward "antifragile" evaluation centered on higher-order cognitive processes like creativity and argumentation.

- Cognitive Expansion vs. Atrophy: AI must function as a "critical prosthesis" that expands human boundaries and fosters autonomy, rather than an "intellectual crutch" that atrophies reasoning.

Action Checklist for Leadership and Faculty

For Administrators (Leadership & Branding):

- [ ] Establish an Ethical Framework: Define clear policies on data privacy, algorithmic transparency, and human-in-the-loop responsibility.

- [ ] Foster Purposeful Branding: Position the institution based on its ability to form citizens with cognitive sovereignty, rather than the quantity of software licenses it holds.

- [ ] Ensure Equity: Invest in infrastructure and training to prevent AI from deepening the digital and cognitive divide.

- [ ] Create Innovation Labs: Provide spaces for faculty to collectively experiment with AI and develop new pedagogical practices.

For Teachers (Didactics & Classroom):

- [ ] Design "Artisanal Prompts": Craft situated, creative instructions that challenge the algorithm and force students to go beyond the obvious response.

- [ ] Implement Systematic Doubt: Teach students to verify sources, contrast data, and detect AI "hallucinations" or inaccuracies.

- [ ] Prioritize the Human Connection: Use AI to automate administrative tasks, liberating time for personalized tutoring, empathy, and active listening.

- [ ] Redesign Assignments: Focus on open-ended, project-based problems rooted in local reality that cannot be solved by a simple "copy-paste".

Strategic Implementation Guide

- What to DO: Empower teachers to become curators of knowledge and to master prompt engineering as a strategy for maintaining pedagogical control.

- What to AVOID: The uncritical adoption of tools that merely "automate 19th-century mediocrity" or delegate essential pedagogical decisions to an algorithm.

- What to PRIORITIZE: Critical AI literacy and a sovereign educational strategy that prepares people to think critically and create with purpose in an automated world.

The following sources provide the academic and institutional foundation for the dilemmas regarding digital sovereignty, pedagogical architecture, and the ethical integration of AI in education:

- Hamadeh, S., & Amin, H. (2025). "AI, Education and Digital Sovereignty." Frontiers in Education. This paper analyzes how AI education has evolved from a simple innovation tool to a core instrument of geopolitical power, national security, and cultural sovereignty. It explores the "AI race" and the critical role of educational policy in maintaining a nation's ability to control its own digital assets and knowledge systems.

- Chinchilla-Valverde, J. L. (2026). "Between Promise and Paradox: Artificial Intelligence in Education, Ethics, and the Teacher's Role." Tecnología en Marcha. This specialized article argues that AI represents a "change of pedagogical regime," moving from simple automation to a horizon of human-AI co-agency. It emphasizes that the educational value of AI depends on strict ethical conditions, such as accountability, justice, and inclusion, while redefining the teacher as a curator of reasoning.

- UNESCO. (2021). AI and Education: Guidance for Policy-makers. As a primary institutional report, it provides an essential framework for ensuring that AI serves the Right to Education (SDG 4). It offers guidance on protecting data privacy and preventing algorithmic biases from deepening existing social inequalities.

- Urinboyeva, D. O. (n.d.). "From Information Provider to Pedagogical Architect: Redefining the Teacher's Sovereignty in the Age of AI." Modern Education and Development. This research details the transformation of the teacher's professional identity. It explores the shift from traditional knowledge transmission to the "Pedagogical Architect" model, where authority is derived from the ability to guide and contextualize information rather than just providing it.

- Radtke-Bederode, I., & Meireles-Ribeiro, L. O. (2025). "Educational Platformization with Generative AI: Impacts on Teacher Autonomy." Alteridad. This qualitative study analyzes specific educational platforms and warns against "uncritical adoption" that can subordinate teachers to biased algorithmic logic. It proposes Prompt Engineering as a vital competency for teachers to reclaim their professional independence and creative agency.

- "Pedagogical Sovereignty in the Face of Artificial Intelligence: Dilemmas and Challenges." (2026). Haciendo Tecnología Educativa. This article highlights the urgent dilemma between using AI as an "intellectual crutch" that atrophies human reasoning or as a "critical prosthesis" that expands cognitive boundaries. It advocates for specialized educational technology consulting to ensure technology remains a tool for empowerment rather than a mechanism for standardizing mediocrity.