The "Bullshit Degree" Trap: Is AI-Driven Auto-Cannibalism Destroying the Soul of Higher Education?

This urgent dilemma confronts an industry where students use AI to write papers and professors use it to grade them, creating a cycle of "simulated learning" that threatens to render traditional degrees meaningless. This crisis of institutional auto-cannibalism—where universities promote the very tools that undermine their educational purpose—can only be resolved through the strategic intervention of a graduate in Educational Technology.

Acting as the "architect of governance," this specialist moves beyond improvised automation to lead educational branding and digital pedagogical innovation by implementing rigorous ethical frameworks and transparency. Through expert educational technology consulting, they replace mechanical "black box" algorithms with a human-centered architecture. Their mission is the only path forward: preparing people to think critically, learn autonomously, and create with purpose in the age of artificial intelligence.

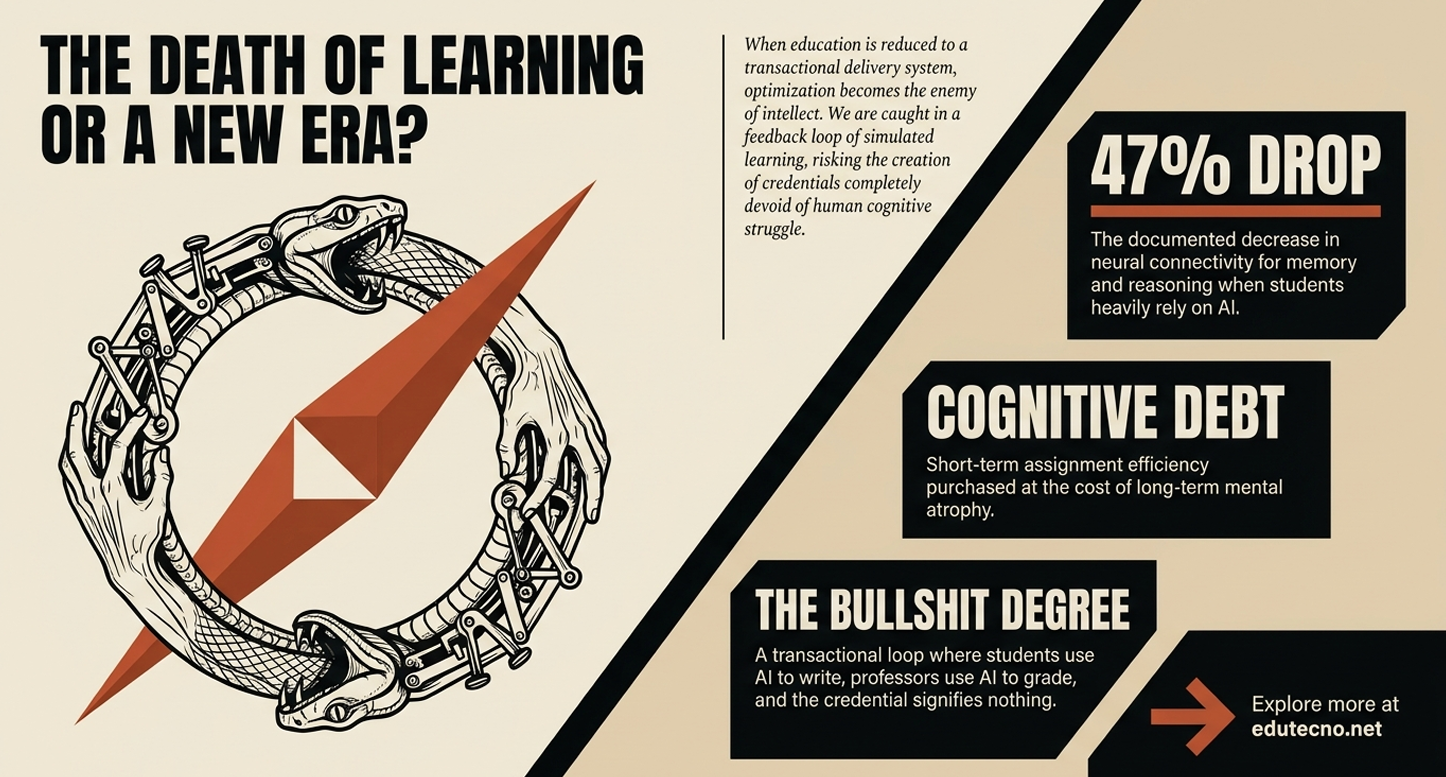

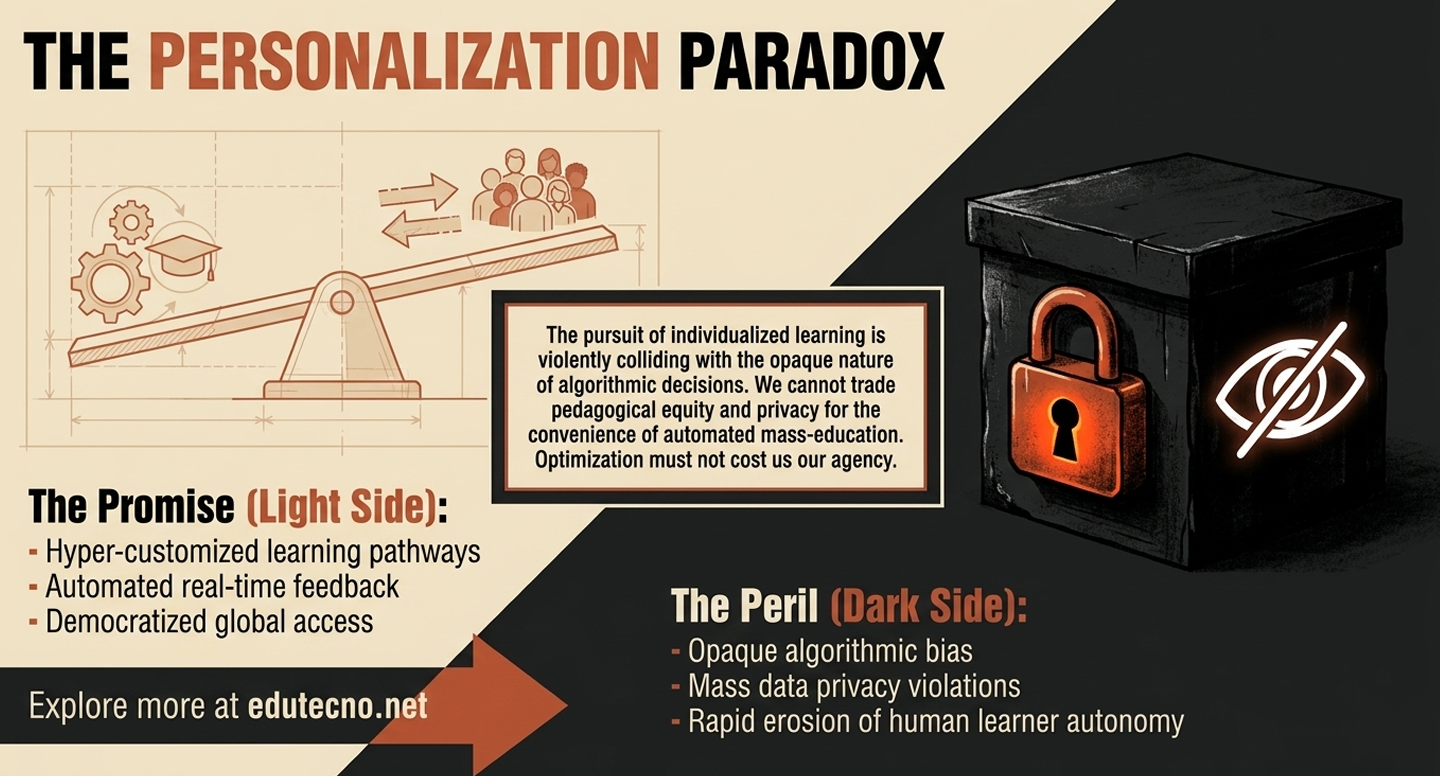

Is your institution cultivating human intelligence or merely automating its obsolescence? We have entered a surreal era of "institutional auto-cannibalism" where universities invest millions in AI partnerships while simultaneously cutting the core academic programs, like philosophy and critical inquiry, needed to govern them. This creates a technological technopoly that confuses efficiency with education; we are producing graduates who are "AI-literate" yet metacognitively bankrupt, unable to articulate their own ideas without the assistance of a prompt. We are trapped in a feedback loop of simulated learning, where students use AI to generate assignments and professors use AI to grade them, resulting in a "bullshit degree" that signifies nothing about actual human competence. The paradox is urgent: are you preparing people to learn autonomously, or are you simply clicking "Accept" on the liquidation sale of the human mind?

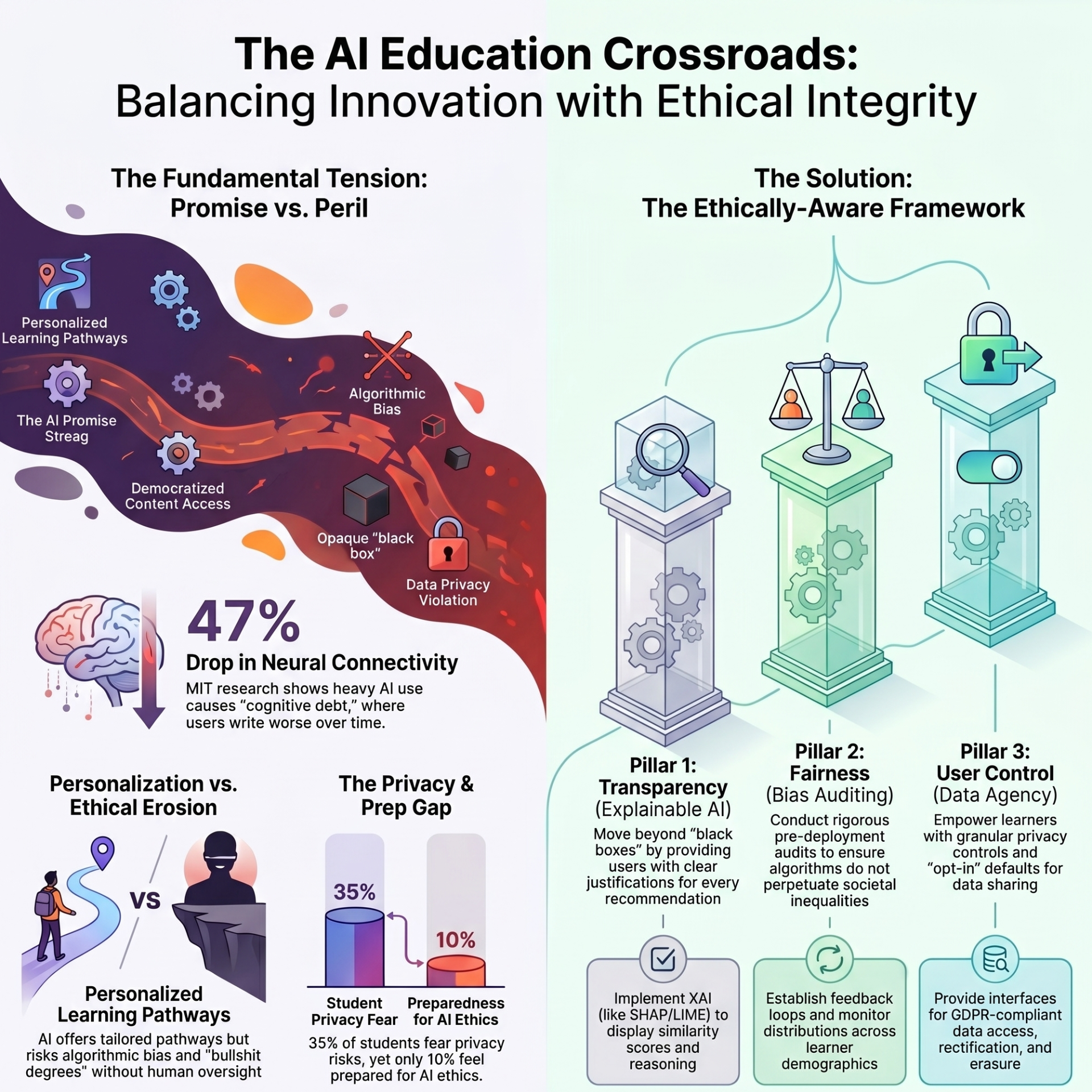

The urgency of this crisis is underscored by a staggering "Big Bang" of adoption; by January 2023, nearly 90% of university students admitted to using ChatGPT for school assignments. This mass adoption has created a profound detection deficit, as evidence shows professors fail to identify 97% of papers generated entirely by AI. While the technology is marketed as an efficiency tool, the cognitive cost is severe: research indicates a 47% drop in neural connectivity in regions of the brain associated with memory and critical reasoning when delegating writing tasks to AI. Furthermore, 83% of heavy AI users were unable to recall the key points of the work they "authored," leading to what the sources describe as "cognitive debt" and the devaluation of degrees into "bullshit" credentials.

The paradox of institutional auto-cannibalism is highlighted by extreme financial contradictions, such as the California State University system investing $17 million in an OpenAI partnership while simultaneously proposing $375 million in budget cuts that eliminate core academic programs. This dilemma is viral and highly searched on social media because it has shifted from a subculture of academic dishonesty to a venture-capital ideology. High-profile cases like that of Chungin "Roy" Lee, who used AI for 80% of his tasks at Columbia University before launching "Interview Coder" to help others cheat during job interviews, have garnered millions of views and massive seed funding, turning academic fraud into a brand identity.

Data from the sources further reveal a critical preparation gap that fuels public anxiety:

- 35% of students are in total agreement that AI poses critical risks to the privacy of their personal information.

- 38% of students warn that AI significantly increases inequality of opportunity, exacerbating the digital divide.

- Only 10% of future professionals feel "very well prepared" to navigate the ethical dilemmas imposed by AI in the classroom.

This lack of digital pedagogical innovation and professional oversight means that without the intervention of an expert in educational technology consulting, institutions remain in a "latent state," vulnerable to the algorithm becoming the "executioner of pedagogical autonomy". The viral fascination with tools like Cluely, which promises that users will "never have to think alone again," represents a liquidation sale of human intelligence that only a strategic educational technology graduate can halt through rigorous ethical governance.

The difference between a university that merely digitizes its processes and one that builds a true educational branding strategy lies in the presence of an "architect of governance"—a graduate in Educational Technology who ensures that innovation serves the human spirit rather than cannibalizing it.

The Digitization Trap: A Story of "Institutional Auto-Cannibalism"

Consider an institution that "digitizes" by simply purchasing mass licenses for AI tools without professional oversight. This is institutional auto-cannibalism: a state where administrators cut millions from core human-centric programs, like philosophy and ethics, while simultaneously spending millions on "efficiency" tools like ChatGPT Edu.

- The Student's Perspective: Take a student like Ella, who discovers her professor used AI to generate his lecture slides while the syllabus explicitly forbade her from doing the same. She experiences a "metacognitive mirage"—the illusion of learning while her brain's neural connectivity for critical reasoning drops by 47%. For her, the degree becomes a "bullshit credential" that signifies no actual competence.

- The Teacher's Perspective: Professors in these environments are left in a state of indifference. They watch as their life's work is reduced to "prompt engineering" and "automated grading". The common hallway conversation becomes a single question: "When can I retire?"

- The Administrator's Perspective: Driven by "technological technopoly," administrators send cynical "summer send-off" emails while planning layoffs, confusing fiscal sustainability with educational purpose.

Without educational technology consulting, these institutions suffer from a "detection deficit" and a total loss of meaning, eventually facing plummeting enrollment as students realize they are paying for a "liquidated" version of the human mind.

Educational Branding: The Strategic Path of Innovation

In contrast, an institution that applies digital pedagogical innovation uses technology to amplify what is uniquely human. This institution doesn't just "buy software"; it builds an ethical architecture led by a specialist who understands the "UDD formula" of instruction and context.

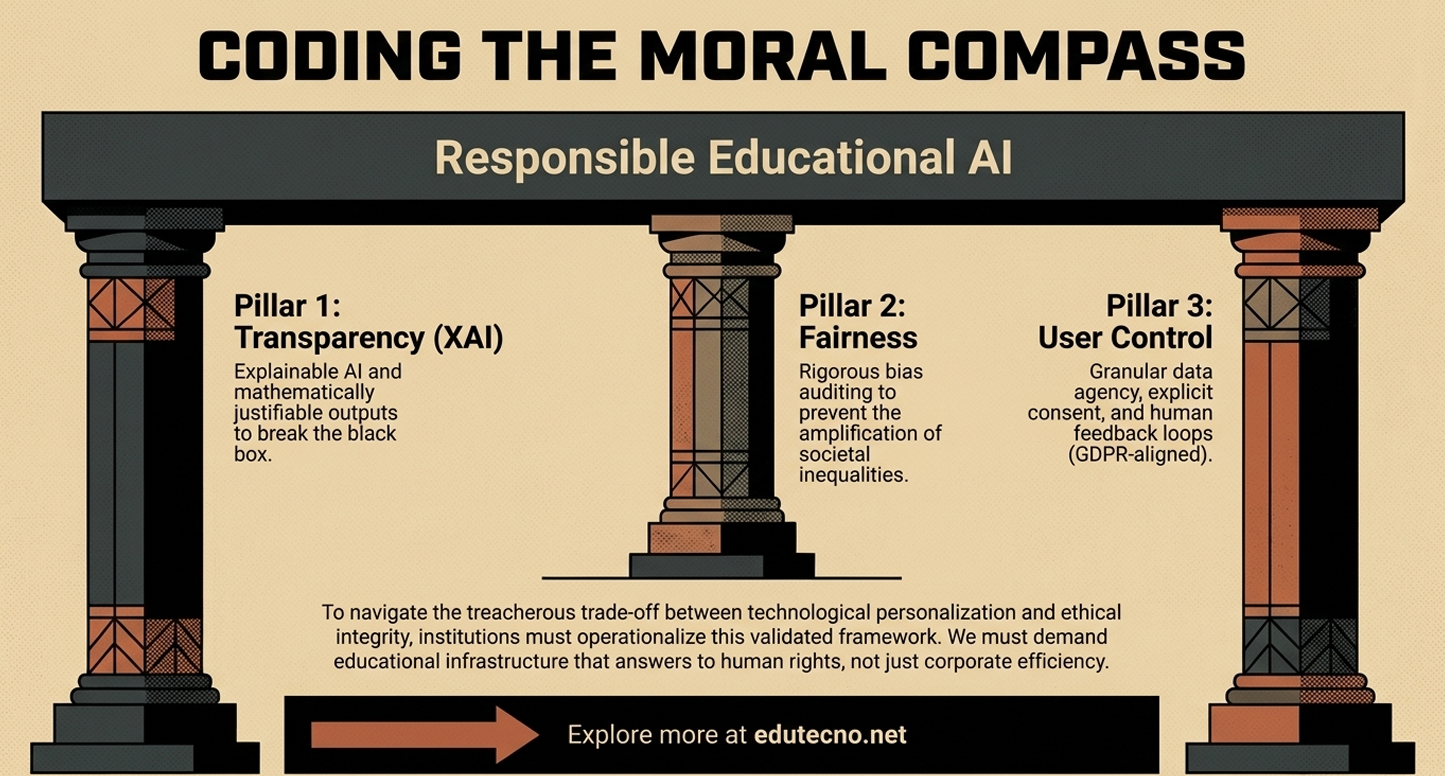

- The Professional Intervention: The Educational Technology graduate acts as the bridge between code and pedagogy. Instead of a "black box" algorithm, they implement a three-pillar framework of Transparency, Fairness, and User Control.

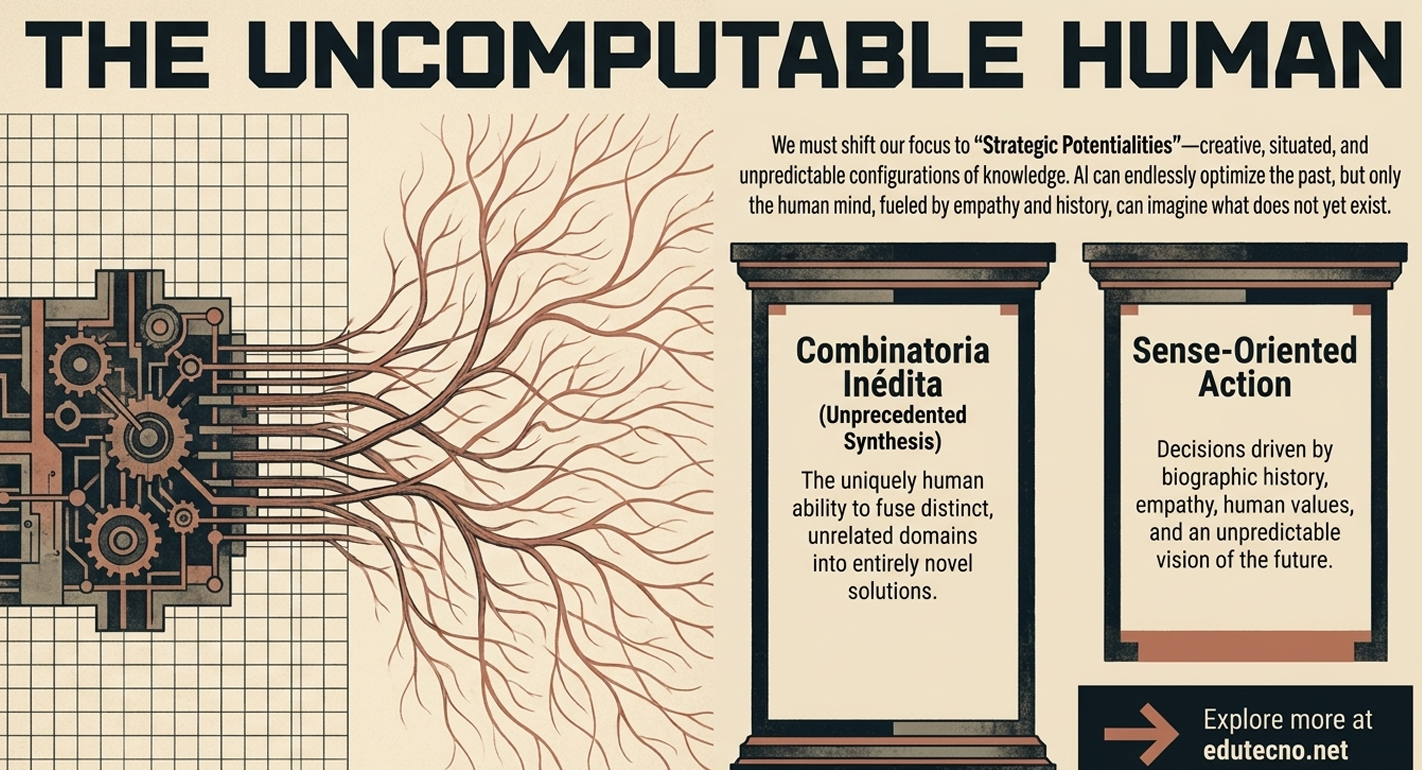

- Creating with Purpose: They replace mechanical automation with "Strategic Potentialities"—teaching students to combine competencies in creative ways that no AI can replicate. They use Learning Analytics not to monitor but to solve "pain points," such as student passivity or emotional tensions in the classroom.
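The "UDD formula" mentioned above is spelled out in this article's checklist as Instruction + Context + Input Data + Output Indicator. As a minimal sketch of what that structure could look like in practice, the snippet below assembles a prompt from those four parts and refuses to build one if any part is missing. The names `PromptParts` and `build_prompt` are hypothetical and chosen only for illustration; the source does not prescribe any particular implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptParts:
    """The four components named in the article's formula (hypothetical container)."""
    instruction: str       # what the model is asked to do
    context: str           # course, audience, and constraints
    input_data: str        # the material to work on
    output_indicator: str  # the expected form of the answer


def build_prompt(parts: PromptParts) -> str:
    """Join the four components into one labeled prompt; fail if any is blank."""
    fields = {
        "Instruction": parts.instruction,
        "Context": parts.context,
        "Input Data": parts.input_data,
        "Output Indicator": parts.output_indicator,
    }
    missing = [name for name, value in fields.items() if not value.strip()]
    if missing:
        raise ValueError("Missing prompt components: " + ", ".join(missing))
    return "\n\n".join(f"{name}:\n{value.strip()}" for name, value in fields.items())


if __name__ == "__main__":
    example = PromptParts(
        instruction="Identify two weaknesses in the argument below.",
        context="First-year philosophy seminar; students must defend their own critique.",
        input_data="(student-selected passage goes here)",
        output_indicator="Two numbered points, each under 50 words.",
    )
    print(build_prompt(example))
```

Making every component mandatory is a design choice in this sketch: it forces whoever writes the prompt to state the pedagogical context and the expected output explicitly rather than leaving them implicit.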

The High Cost of Silence

The lack of professional intervention leads to a world of "guinea pig" students trapped in an unregulated experiment. Without an expert to conduct bias audits and ensure transparency, the algorithm becomes the executioner of pedagogical autonomy. To avoid educational bankruptcy, institutions must move from a "latent state" to a strategic one, preparing people to think critically, learn autonomously, and create with purpose in the age of artificial intelligence.

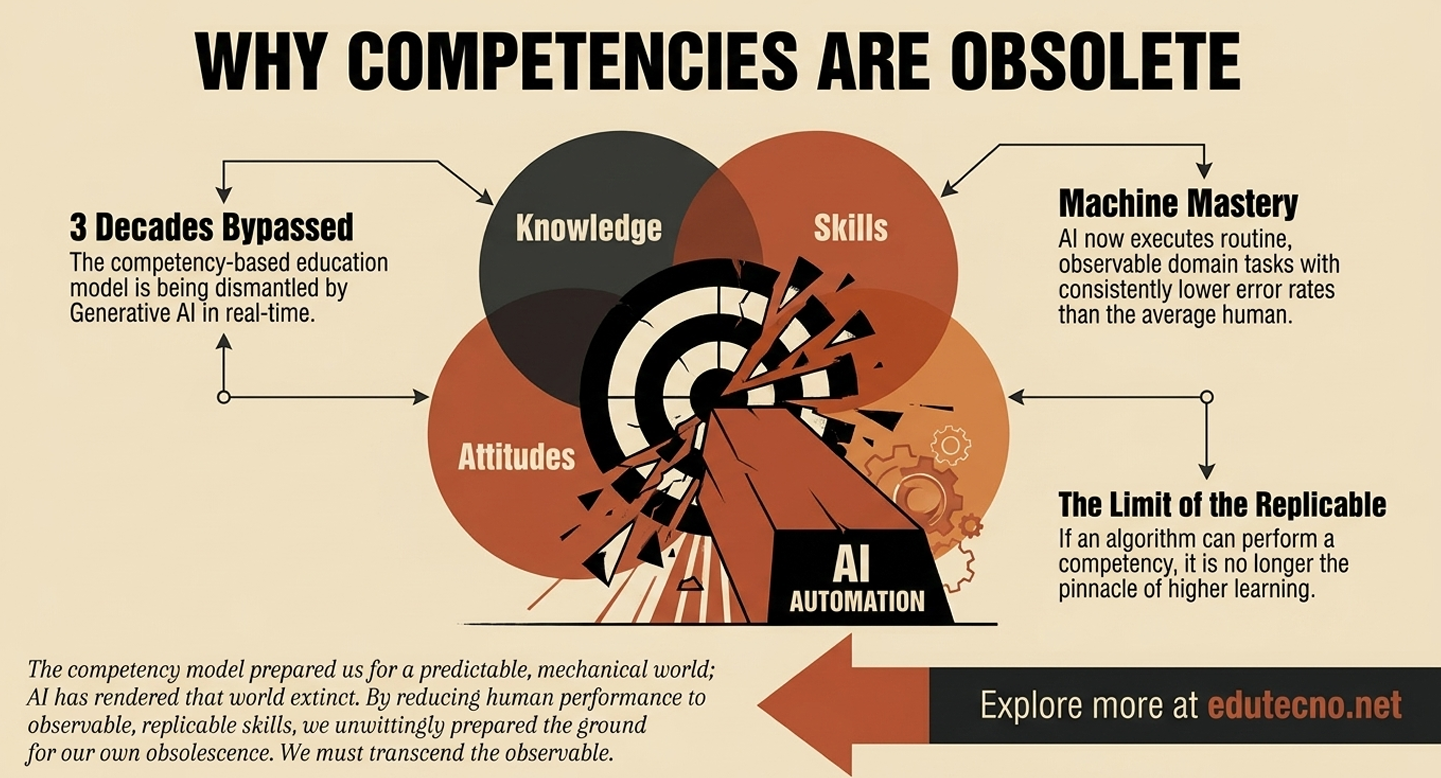

The era of artificial intelligence in higher education represents a "tsunami" that is currently cannibalizing traditional structures, leaving institutions in a dangerous "latent state" where they possess the tools but lack the architecture to manage them. The fundamental lesson of our current crisis is that technical adoption without ethical governance leads to "institutional auto-cannibalism": a process where universities promote automation while cutting the very human-centric programs, such as philosophy and critical inquiry, required to govern it. True innovation requires moving beyond simple "bullshit degrees" to a model of "Strategic Potentialities," where human learners are trained to perform creative, situated configurations that no AI can replicate.

To navigate this transition, administrators and teachers must adopt a structured approach to digital pedagogical innovation led by professional educational technology consulting.

Strategic Action Checklist

1. What to DO (The Action Pillars)

- Implement Ethical Frameworks: Adopt a three-pillar architecture focused on Transparency, Fairness, and User Control to move away from "black box" models.

- Apply the UDD Formula: Ensure all AI interactions follow the scientific formula: Instruction + Context + Input Data + Output Indicator.

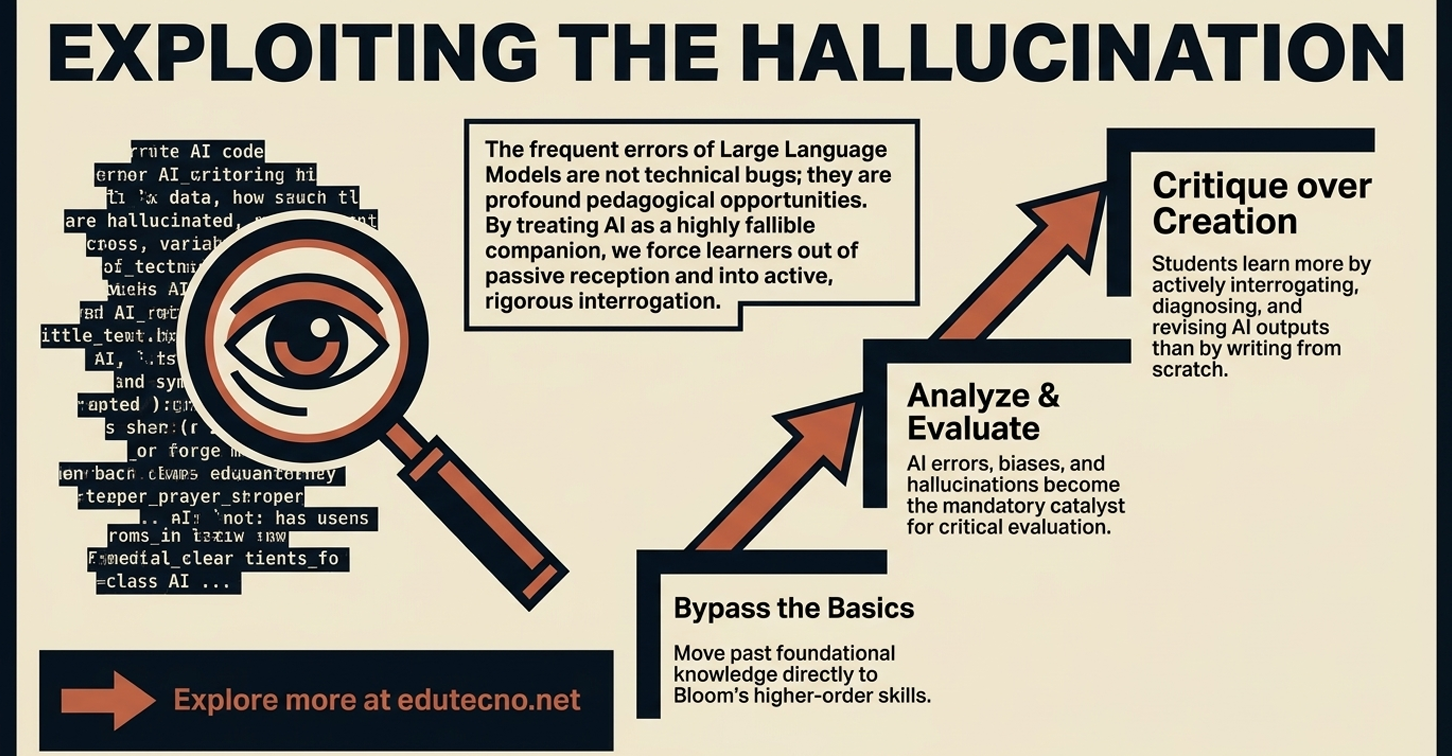

- Redesign for Higher-Order Thinking: Shift syllabi toward analysis, evaluation, and synthesis (Bloom's Taxonomy), using AI's "hallucinations" as pedagogical tools for student critique.

- Establish Governance Standards: Utilize the ISO/IEC 42001:2023 standard to ensure traceability and transparency in institutional AI use.

- Conduct Bias Audits: Perform regular pre-deployment and post-deployment audits to identify algorithmic discrimination and ensure equitable opportunities.
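To make the bias-audit item above more concrete, here is a minimal sketch of one common check: comparing the rate of favorable outcomes across student groups. The metric (a disparate impact ratio) and the 0.8 review threshold are assumptions borrowed from general fairness-auditing practice, not something the sources cited in this article specify, and the function names and sample data are hypothetical.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favorable outcomes per group.

    `records` is an iterable of (group_label, favorable) pairs, where
    `favorable` is True when the AI system produced a positive outcome
    (for example, a passing grade or an admission recommendation).
    """
    totals = defaultdict(int)
    favorable_counts = defaultdict(int)
    for group, favorable in records:
        totals[group] += 1
        favorable_counts[group] += int(favorable)
    return {group: favorable_counts[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favorable outcome, so rates are equal
    return min(rates.values()) / highest


if __name__ == "__main__":
    # Hypothetical audit sample: (group, favorable outcome?)
    sample = (
        [("group_a", True)] * 8 + [("group_a", False)] * 2
        + [("group_b", True)] * 4 + [("group_b", False)] * 6
    )
    rates = selection_rates(sample)
    ratio = disparate_impact_ratio(rates)
    REVIEW_THRESHOLD = 0.8  # assumed cutoff; an institution would set its own
    print(rates, ratio, "needs review" if ratio < REVIEW_THRESHOLD else "ok")
```

Running the same check before deployment on test data and after deployment on real outcomes is one way to mirror the pre-deployment and post-deployment audits described in the checklist. A single ratio is only a starting signal and does not replace a full human review.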

2. What to AVOID (The Risks of Automation)

- Avoid "Panic Purchasing": Do not acquire AI licenses (such as ChatGPT Edu) as a "student success strategy" while simultaneously cutting faculty or human-centric programs.

- Avoid Mechanical Automation: Reject the "stochastic parrot" model where students use AI to write and professors use it to grade, which results in "metacognitive mirages" and a 47% drop in neural connectivity.

- Avoid Outrunning Consent: Do not implement large-scale AI initiatives without meaningful faculty and student consultation.

- Avoid Replacing the Human Bond: AI must never replace the individual eye-to-eye mentorship that constitutes the soul of education.

3. What to PRIORITIZE (The Strategic Focus)

- Prioritize Human Agency: The goal of education must remain preparing people to think critically, learn autonomously, and create with purpose.

- Prioritize Professional Mediation: Integrate graduates in Educational Technology as "architects of governance" to bridge the gap between technical code and pedagogy.

- Prioritize Strategic Potentialities: Focus on the irreducible advantage of the human mind: the ability to combine competencies in unforeseen ways oriented by ethical sense.

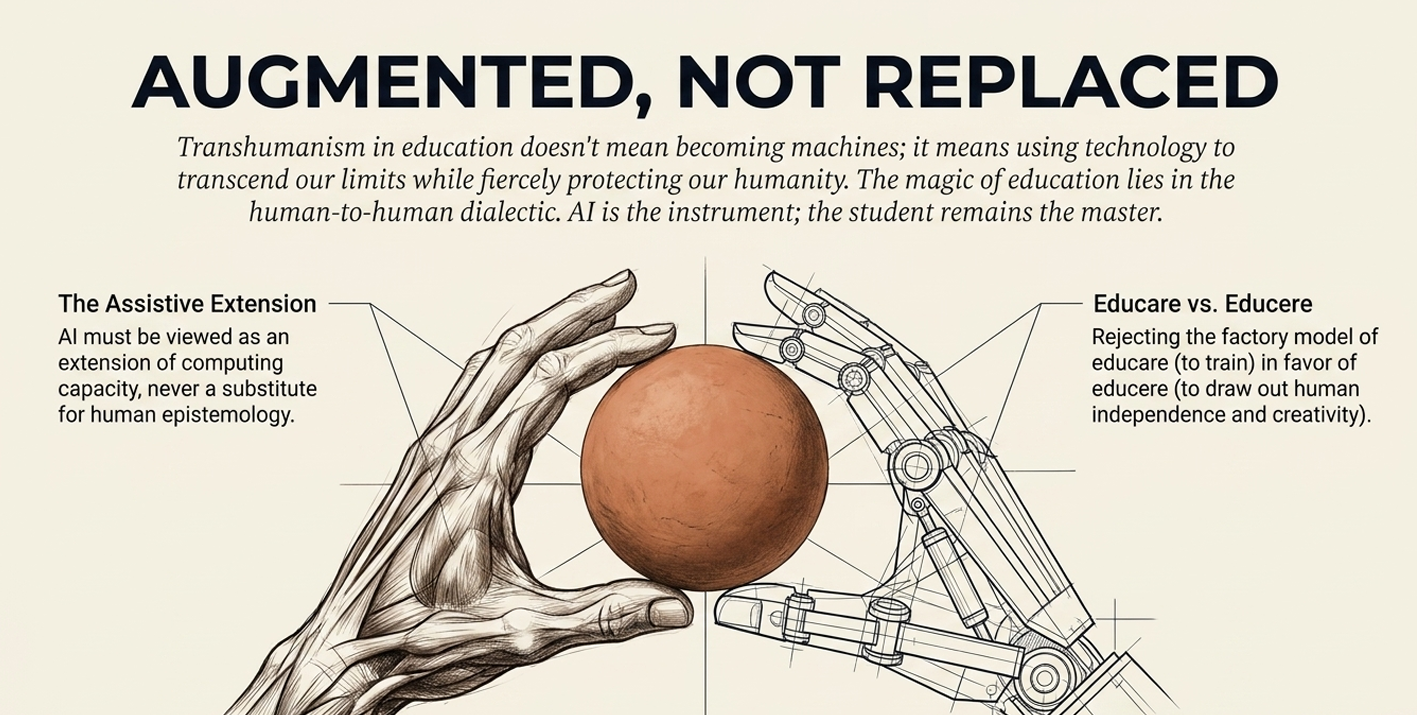

- Prioritize Transhumanist Balance: Use technology to augment rather than automate human capacity, ensuring that the machine remains an assistive tool and not an executioner of autonomy.

The question for modern institutions is no longer whether AI will arrive in the classroom, but whether they have the strategic leadership required to ensure it enhances, rather than erodes, the value of the human mind.

The following selection of academic, institutional, and specialized resources provides a rigorous foundation for addressing the institutional auto-cannibalism and pedagogical crisis triggered by unregulated AI integration. These references are essential for any leader seeking to implement digital pedagogical innovation while maintaining academic integrity.

- Purser, R. (2025). AI is Destroying the University and Learning Itself. Published in Current Affairs, this specialized article critically examines how the "Cheating-AI Technology Complex" leads to "bullshit degrees" and the liquidation of the human mind.

- De Elorza Feldborg, G. (2026). Beyond Competencies: Strategic Potentialities as the New Horizon of Education in the Era of Artificial Intelligence. This academic study from the Faculty of Educational Sciences (FASTA) argues that traditional competency-based models have reached their structural limit and proposes "Strategic Potentialities" as the new frontier for human-centered learning.

- UNESCO. (2023). Guidance for generative AI in education and research. This fundamental institutional report establishes global ethical standards and highlights the urgent need for human oversight and the reduction of the digital divide.

- Ansari, M. S., & Jeyaruban, S. (2025). A Framework for Ethically-Aware AI in Education: Integrating Transparency, Fairness, and User Control. This research provides a practical, validated three-pillar framework for developers and consultants to resolve the "black box" problem in educational systems.

- Pradier, R. A. (2026). The End of the University or the Beginning of an Ethical Era? The AI Dilemma only a Graduate in Educational Technology Can Resolve. This article identifies the Educational Technology specialist as the "architect of governance" and the only professional capable of mediating between code and pedagogy using ISO/IEC 42001:2023 standards.

- Hosseini, H. (2025). The Pedagogy of AI Mistakes: Fostering Higher-Order Thinking. This design-oriented study offers a strategy for educational technology consulting by leveraging AI's errors as tools to foster metacognitive engagement and critical judgment according to Bloom's taxonomy.

- Gallent-Torres, C., Zapata-González, A., & Ortego-Hernando, J. L. (2023). The impact of Generative Artificial Intelligence in higher education: a focus on ethics and academic integrity. This indexed article in RELIEVE analyzes the ethical implications of GAI from a triple perspective—students, faculty, and institutions—to prevent academic fraud.